The AI Healthcare Gold Rush - Promise, Peril, and What the Data Actually Shows

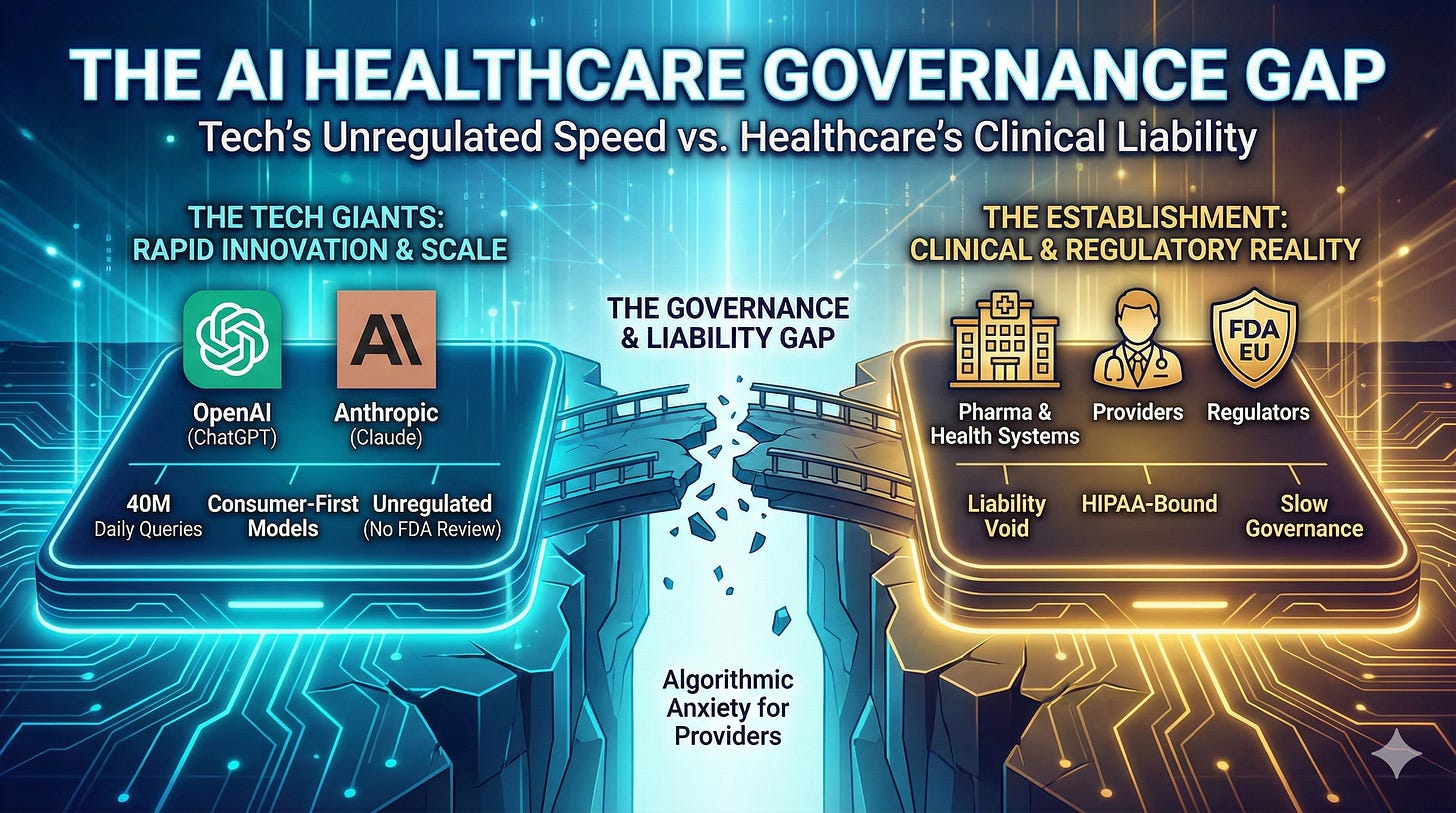

Inside the $14.2 billion bet on healthcare AI—and the governance gap no one's solving

In my recent LinkedIn article, “The AI-Augmented Patient,” I examined the early signals from OpenAI and Anthropic’s healthcare AI launches and their implications for patients, providers, and pharma. Here, I want to go deeper—into the data, the critical coverage, and the strategic questions that will define whether this moment becomes transformative or cautionary.

January 2026 may be remembered as the month healthcare AI moved from experimental to infrastructural—or as the month the hype cycle peaked before the reckoning. The data tells a more complex story than either the boosters or the skeptics acknowledge.

This analysis is for readers who want the full picture: the capital flows, the accuracy benchmarks, the regulatory gaps, and the strategic questions that will separate the winners from the cautionary tales.

Let’s dig in now!

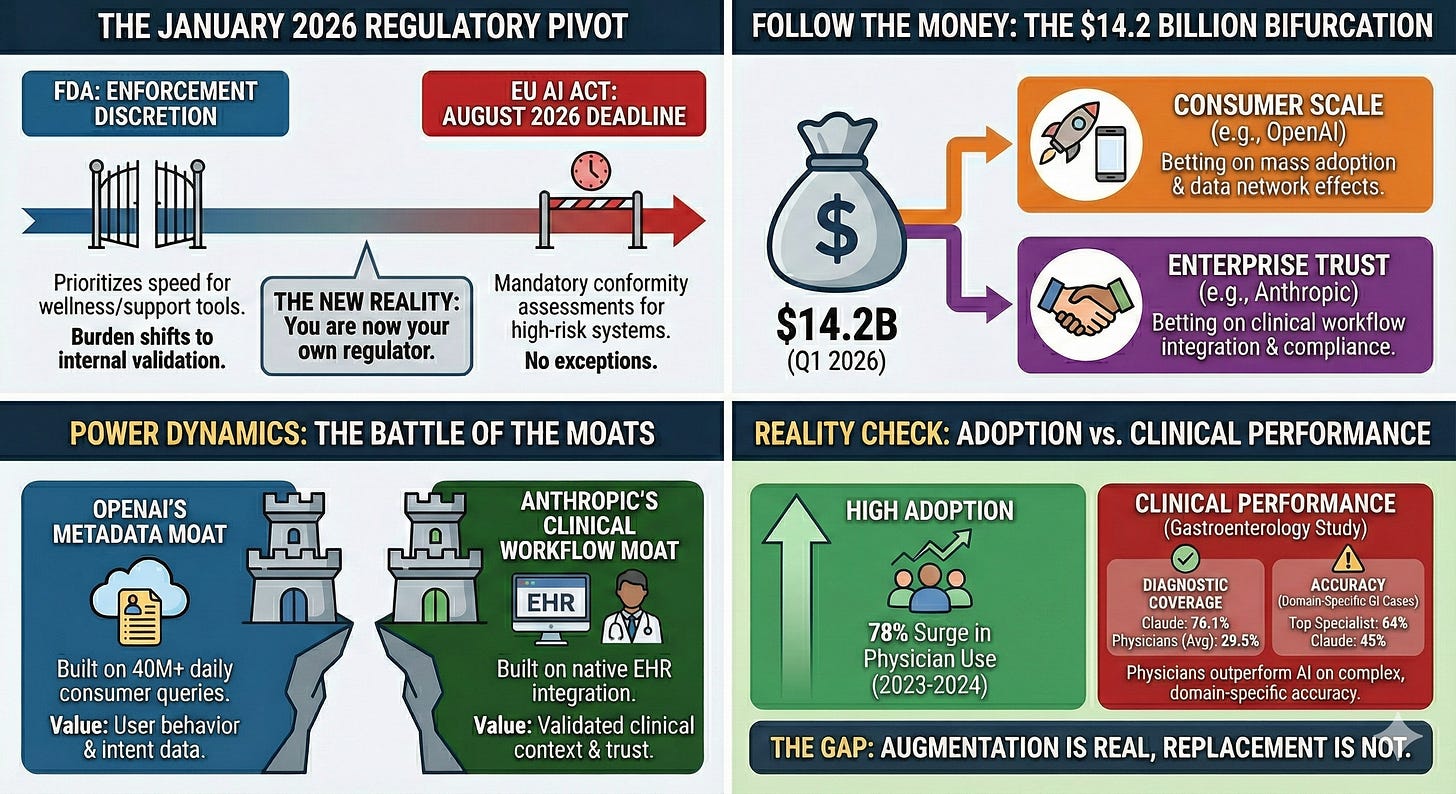

Infographic generated by the author using Nano Banana Pro (February 2026)

📋 TL;DR: Healthcare AI is now infrastructure, not experiment - but the governance gap is widening faster than the technology gap.

📊 Evidence: $14.2B in digital health funding (2025);1 54% to AI startups;1 66% of physicians now using AI in practice.3 40M+ daily health queries on ChatGPT alone.5

➡️ Implication: The surge in capital and AI adoption signals that healthcare AI is inevitable. First-mover advantage accrues to organizations with governance frameworks - not just technology deployments.

✅ Your Move: Organizations that wait for regulatory clarity will cede position. Those that deploy without governance will accumulate risk. Start building the governance and validation frameworks before they are mandated, or watch competitors do it first.

I. The Market Context: Why Now?

The Capital Surge for AI Health Startups

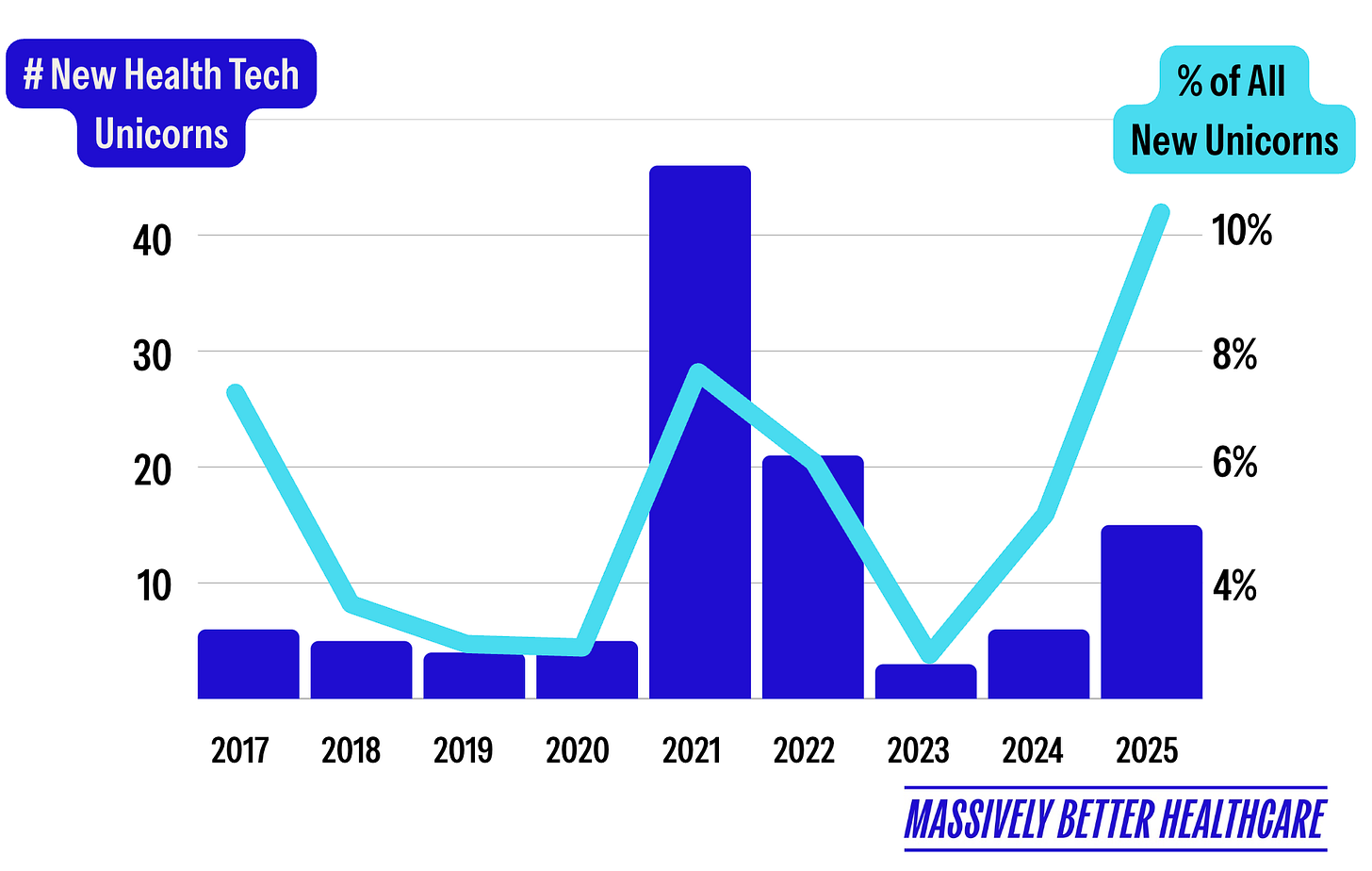

Digital health funding reached $14.2 billion in 2025—a 35% increase over 2024’s $10.5 billion and the highest since the 2022 peak.1 More importantly, 54% of that funding went to AI-enabled startups, up from 37% in 2024.1 AI startups raised 19% larger deals on average. Eight healthcare AI unicorns were created in 2025 alone with megafund participation (Andreessen Horowitz, General Catalyst, and Kleiner Perkins leading the pack) driving larger investment rounds (~80% mega deals).1,2

Source: Halle Tecco, Massively Better Healthcare, Your 2025 HealthTech Recap is Here! Substack.

Healthcare organizations are adopting AI 2.2x faster than the broader economy.2 Twenty-two percent have deployed domain-specific AI—a 7x increase from 2024, 10x from 2023.2 Healthcare AI spending hit $1.4 billion in 2025, nearly triple 2024. And 85% of that spend is flowing to startups, not incumbents.2 Mega-rounds signal market confidence: Abridge ($300M Series E at $5.3B valuation),34 Ambience ($243M Series C at $1.25B valuation),35 Function Health ($298M Series B at $2.5B valuation),25 Hippocratic AI ($126M Series C at $3.5B valuation),26 and OpenEvidence ($250M Series D at $12B valuation).27

Series B deal flow thinned dramatically in Q3 2025, with only 30 rounds recorded compared to an average of 63 in prior years, as investors increasingly concentrated capital in proven winners rather than funding companies still seeking product-market fit. This creates a demographic gap where fewer companies are graduating to maturity while the early-stage pipeline remains bloated with seed-stage bets. The market for AI agents in healthcare is projected to reach $6.92 billion by 2030—a 44.1% CAGR from $1.11 billion in 2025.9 This signals a structural shift from rule-based automation toward autonomous, intelligent AI agents embedded directly into clinical, operational, and financial workflows.
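As a quick arithmetic check on the projection above, the sketch below recomputes the implied compound annual growth rate from the two endpoints quoted in the text ($1.11 billion in 2025 and $6.92 billion in 2030). The function name `cagr` is illustrative and does not come from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the text (USD billions); 2025 -> 2030 is five periods.
implied = cagr(1.11, 6.92, 5)
print(f"Implied CAGR: {implied:.1%}")
```

The result should land close to the 44.1% cited; any small difference likely reflects rounding in the published endpoints.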

The Strategist’s Perspective

The capital surge reflects investor confidence that healthcare AI will become essential infrastructure, but the bifurcation between mega-rounds and a starved Series B market suggests a concentration of bets on perceived winners. For healthcare and pharma leaders, this means vendor due diligence must now include financial sustainability analysis. Evaluate whether your AI partners have a credible path to sustainable unit economics, not just compelling technology.

The Physician Shift

The AMA’s February 2025 survey3 revealed a tipping point: 66% of physicians now use AI in their practice, up from 38% in 2023—a ~78% increase in a single year.3 Sixty-eight percent see “definite or some advantage” to AI tools. The top opportunity? Addressing administrative burden (57%).

The administrative burden is real: physicians complete an average of 39 prior authorizations weekly, spending 13 hours on the process.4 Ninety-three percent report that PA delays patient care; 89% say it contributes to burnout.4 U.S. healthcare administrative spending totals $740 billion annually.2,4 Eighty percent of the market remains untapped,2 so AI that automates these “people-intensive” workflows represents a generational opportunity.

📋 TL;DR: Healthcare AI is now infrastructure, not experiment - but the governance gap is widening faster than the technology gap.

📊 Evidence: $14.2B in digital health funding (2025); 54% to AI startups; 66% of physicians now using AI in practice. 40M+ daily health queries on ChatGPT alone.

➡️ Implication: The capital flood signals that investors see healthcare AI as inevitable. First-mover advantage accrues to organizations with governance frameworks, not just technology deployments.

✅ Your Move: Stop debating whether to engage. Start building the governance frameworks and validation protocols before scaling deployments.

II. The Product Landscape: Compressed Snapshots

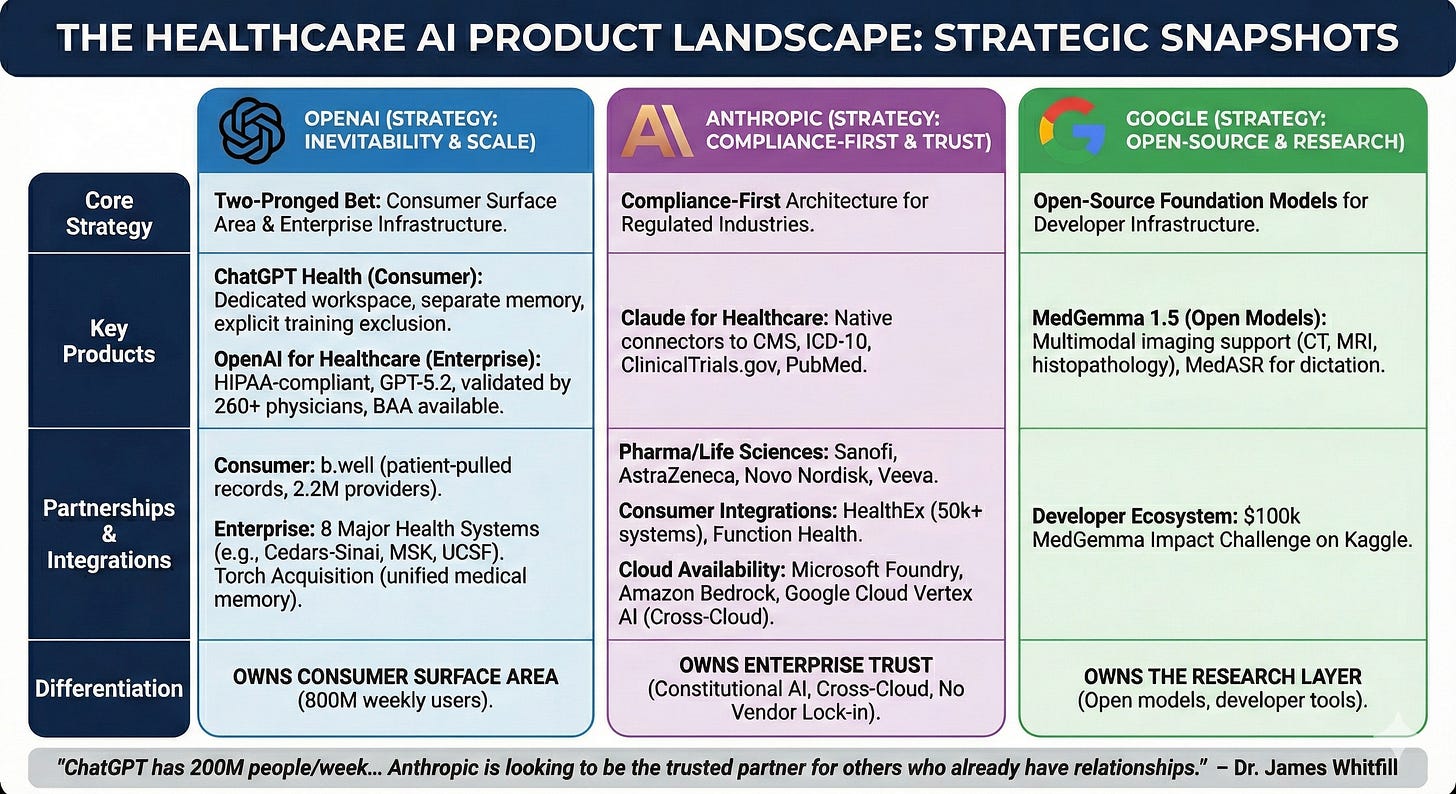

Infographic generated by the author using Nano Banana Pro (February 2026)

OpenAI’s healthcare strategy is a two-pronged bet on inevitability. ChatGPT Health (consumer) creates a dedicated health workspace with separate memory, encryption, and explicit exclusion from model training, and partners with b.well (2.2M providers, 320 health plans) to enable patient-pulled medical records.6 OpenAI for Healthcare (enterprise) offers HIPAA-compliant infrastructure with GPT-5.2 models validated by 260+ physicians, BAA availability, and deployment across eight major health systems including Cedars-Sinai, Memorial Sloan Kettering, and UCSF.36 The Torch acquisition (“unified medical memory”; reportedly $60-100 million in equity) signals long-term health data infrastructure ambitions beyond the chatbot layer.28,29

Anthropic’s Claude for Healthcare is a compliance-first architecture designed for regulated industries. Native connectors to CMS coverage databases, ICD-10, ClinicalTrials.gov, and PubMed’s 35M articles speak directly to pharmaceutical R&D and payer operations.7,37 Enterprise partnerships with Sanofi, AstraZeneca, Novo Nordisk, Genmab, AbbVie, Banner Health, Stanford Healthcare, Flatiron, and Veeva establish deep life sciences credibility.38,39 Consumer integrations via HealthEx (50,000+ health systems) and Function Health provide record access.40

Claude is available through Microsoft Foundry, Amazon Bedrock, and Google Cloud Vertex AI — making it the only frontier AI model deployed across all three major cloud providers.41,42 For healthcare organizations, this cross-cloud availability eliminates vendor lock-in, enables deployment within existing compliance frameworks, and allows health systems to integrate AI into established infrastructure without rebuilding security and governance from scratch.

Google’s MedGemma represents a conspicuously different strategy: open-source foundation models rather than consumer deployment. MedGemma 1.5 (updated January 2026) offers multimodal medical imaging support for CT scans, MRI, and histopathology, alongside MedASR for clinical dictation.8 The $100,000 MedGemma Impact Challenge on Kaggle signals Google’s bet that healthcare AI adoption will be driven by developers building open infrastructure.8,43

The Strategist’s Perspective

OpenAI, Anthropic, and Google are pursuing fundamentally different strategies: consumer surface area, enterprise trust, and open-source research, respectively. None yet offers what regulated industries require—reproducible outputs, auditable decisions, and validated workflows. For healthcare organizations, this means no single vendor solves the full problem. The strategic question is not which platform to choose, but how to architect governance that remains vendor-agnostic as the market matures.

The differentiation is clear: OpenAI owns consumer surface area (800M weekly users). Anthropic owns enterprise trust (Constitutional AI, longer context windows). Google owns the research layer (open models, developer tools). As Dr. James Whitfill (HonorHealth) noted, “ChatGPT has 200 million people per week asking health questions... Anthropic does not have that user base, and so they are looking to be the trusted partner for others who already have relationships with patients.”30

III. Vendor Economics & Power Dynamics

The economics of healthcare AI reveal a structural tension that no vendor has resolved.

The Compute Cost Problem: OpenAI burned through approximately $8 billion in cash in 2025—and is now exploring advertising in ChatGPT, a revenue stream Sam Altman once described as “last resort.”45,46 Healthcare AI inference costs are substantial: each complex medical query requires multiple model calls, retrieval-augmented generation, and safety checks. At scale, the marginal cost of 40 million daily health queries is not trivial.

Anthropic faces similar pressures without OpenAI’s consumer scale to amortize costs. The enterprise play requires sales cycles, implementation support, and compliance overhead that consumer products avoid. Both companies are racing to reach sustainable unit economics before their runway shortens.

The Strategist’s Perspective

Patient access to their own records through AI platforms will not replace clinical expertise, but it will shift the starting point of the clinical conversation. Leaders who embrace this shift as an opportunity will use AI to guide patients toward appropriate care pathways, meeting them where they already are.

The Data Moat Question: OpenAI explicitly states ChatGPT Health data won’t train foundation models. Anthropic makes similar commitments. But the metadata—usage patterns, query types, engagement metrics—creates product intelligence that compounds over time. The company that best understands how patients and clinicians use AI health tools will build the most effective products, regardless of model training restrictions.

The Platform Power Play: The b.well partnership gives OpenAI direct patient relationships that bypass health systems. HealthEx gives Anthropic similar reach. Both represent disintermediation strategies: if patients can access their own records and AI interpretation, what happens to the provider’s role as information gatekeeper?

📋 TL;DR: Healthcare AI economics rewards platforms that combine scale and trust. Neither OpenAI nor Anthropic has both—yet.

📊 Evidence: OpenAI is burning ~$8B and exploring advertising. Anthropic prioritizes enterprise trust but lacks comparable consumer reach. User metadata, not models, is the real moat.

➡️ Implication: The winner will be the platform that can scale economically without sacrificing trust across both consumers and enterprises.

✅ Your Move: Evaluate vendor sustainability and data governance as core due diligence; understand how the vendor plans to balance scale with trust before executing multi-year contracts.

IV. The Critical Coverage: What Skeptics Are Saying

The Bloomberg Warning:10 Parmy Olson’s Bloomberg Opinion piece identified a “fatal flaw”: “Doctor Bot will see you now. Let’s hope it doesn’t hallucinate.” She notes Google’s conspicuous absence—perhaps chastened by past failures—and warns OpenAI and Anthropic face similar risks without transparency on hallucination rates.

The Washington Post Test:11 Geoffrey Fowler analyzed a decade of Apple Watch data—29 million steps and 6 million heart rate measurements—and received sharply different assessments: an “F” from ChatGPT Health, a “C” from Claude, and no concern from his physician. Fowler concluded that the core risk is not occasional error, but the confident delivery of volatile conclusions that shift with each query.

The ECRI Ranking:12 ECRI ranked AI chatbot misuse as the #1 health technology hazard for 2026, specifically naming ChatGPT, Claude, Copilot, Gemini, and Grok. Dr. Marcus Schabacker (ECRI CEO): “Medicine is a fundamentally human endeavor. While chatbots are powerful tools, the algorithms cannot replace the expertise, education, and experience of medical professionals.”

The Privacy Chorus:13,14 Sara Geoghegan (EPIC): “ChatGPT is only bound by its own disclosures and promises, so without any meaningful limitation on that, like regulation or a law, ChatGPT can change the terms of its service at any time.” Andrew Crawford (CDT): “Especially as OpenAI moves to explore advertising as a business model, it’s crucial that separation between this sort of health data and memories... is airtight.” The 23andMe bankruptcy in March 2025 served as a wake-up call when the genetic data of 15 million customers was treated as a sellable corporate asset during liquidation proceedings. This precedent raises urgent questions for AI health platforms, where users routinely share sensitive medical details—from symptoms to mental health histories—under privacy policies that lack HIPAA protections and can be altered at any time.

V. What Expanded Studies Tell Us About AI Diagnostic Accuracy

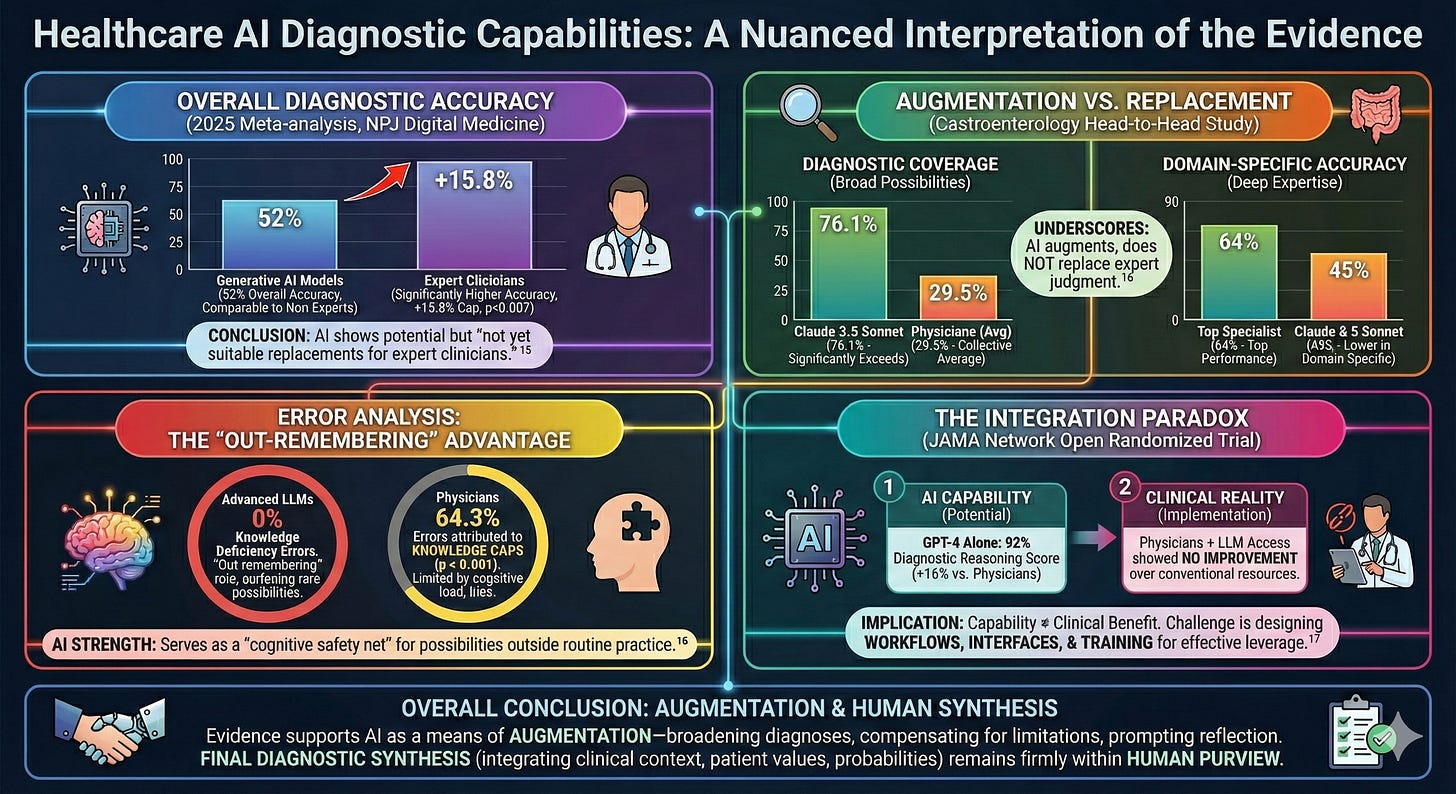

The diagnostic capabilities of healthcare AI have become a subject of considerable debate, and the evidence demands a nuanced interpretation. A 2025 meta-analysis of 83 studies published in NPJ Digital Medicine found that generative AI models achieved an overall diagnostic accuracy of 52%, performing comparably to non-expert physicians but significantly worse than expert clinicians, with an accuracy gap of 15.8 percentage points (p = 0.007).15 The authors concluded that while AI models “demonstrated potential,” they “are not yet suitable replacements for expert clinicians.”

Yet accuracy alone does not capture the full picture. A separate head-to-head study comparing seven LLMs against 22 experienced gastroenterologists (median 18.5 years of clinical experience) on 67 challenging cases found that Claude 3.5 Sonnet achieved a diagnostic coverage rate of 76.1%, significantly exceeding all participating physicians, whose collective average was 29.5%.16 However, on domain-specific GI cases, the top-performing specialist achieved 64% accuracy compared to Claude 3.5 Sonnet’s 45%—underscoring that AI augments rather than replaces expert judgment.
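The gap between the two figures above is easier to see when the metrics are written out. The sketch below assumes that "coverage" means the correct diagnosis appears anywhere in a differential list, while "accuracy" means it is the top-ranked answer. These definitions, the function names, and the toy cases are illustrative assumptions, not the study's protocol or data.

```python
from typing import Sequence


def coverage_rate(differentials: Sequence[Sequence[str]], truths: Sequence[str]) -> float:
    """Share of cases where the true diagnosis appears anywhere in the list."""
    if not truths or len(differentials) != len(truths):
        raise ValueError("need one differential per non-empty truth list")
    hits = sum(truth in ddx for ddx, truth in zip(differentials, truths))
    return hits / len(truths)


def top1_accuracy(differentials: Sequence[Sequence[str]], truths: Sequence[str]) -> float:
    """Share of cases where the true diagnosis is ranked first."""
    if not truths or len(differentials) != len(truths):
        raise ValueError("need one differential per non-empty truth list")
    hits = sum(bool(ddx) and ddx[0] == truth for ddx, truth in zip(differentials, truths))
    return hits / len(truths)


# Toy cases (hypothetical): each inner list is a ranked differential.
toy_differentials = [
    ["celiac disease", "IBS"],
    ["IBS", "Crohn's disease"],
    ["GERD"],
    ["pancreatitis", "cholecystitis"],
]
toy_truths = ["celiac disease", "Crohn's disease", "achalasia", "cholecystitis"]

print("coverage:", coverage_rate(toy_differentials, toy_truths))
print("top-1 accuracy:", top1_accuracy(toy_differentials, toy_truths))
```

In the toy data the correct answer appears in three of four lists but is ranked first only once, which is the pattern the paragraph describes: a broad differential can score high on coverage while trailing on the single best answer.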

The Strategist’s Perspective

The evidence on AI diagnostic accuracy reveals a consistent pattern: LLMs are not outthinking clinicians — they are out-remembering them. This distinction matters strategically. AI excels at surfacing possibilities that fall outside routine practice; clinicians excel at synthesizing context, values, and probabilities. Organizations that deploy AI as a cognitive safety net—rather than a clinical replacement—will extract value while managing risk.

Error analysis from the same study offers insight into where AI adds value: advanced LLMs exhibited zero knowledge deficiency errors, compared to 64.3% of physician errors attributed to knowledge gaps (p < 0.001).16 This finding suggests that LLMs are not outthinking clinicians; they are out-remembering them. Their strength lies in serving as a cognitive safety net, surfacing diagnostic possibilities that fall outside a specialist’s training or routine practice, or that might be missed because of anchoring bias or knowledge gaps, particularly in cases involving rare conditions or atypical presentations.

However, capability does not automatically translate to clinical benefit. A randomized trial published in JAMA Network Open found that GPT-4 alone achieved a 92% diagnostic reasoning score, outperforming both physician groups by 16 percentage points—yet physicians given access to the LLM showed no improvement over those using conventional resources.17 This integration paradox suggests the challenge ahead is not building better AI, but designing workflows, interfaces, and training paradigms that enable clinicians to leverage these tools effectively.

For now, the evidence supports AI as a means of augmentation (broadening differential diagnoses, compensating for knowledge limitations, and prompting clinical reflection), while final diagnostic synthesis, which requires integrating clinical context, patient values, and diagnostic probabilities, remains firmly within human purview.

Infographic generated by the author using Nano Banana Pro (February 2026)

📋 TL;DR: Healthcare AI shows genuine diagnostic potential, but capability does not equal clinical benefit without proper integration.

📊 Evidence: AI diagnostic accuracy matches non-expert physicians but trails experts by 15.8 percentage points.15 LLMs exhibit zero knowledge deficiency errors versus 64.3% for physicians.16 Yet physicians given LLM access showed no performance improvement over conventional resources.17

➡️ Implication: The challenge is not building better AI but designing workflows that enable clinicians to leverage it effectively.

✅ Your Move: Invest in integration design and clinician training—not just AI procurement.

VI. Validation Remains a Challenge with Healthcare AI Platforms

Health AI faces a validation vacuum that traditional medical technology never encountered.

The Strategist’s Perspective

Without established external standards, organizations must build their own validation infrastructure—model versioning, acceptance criteria, and reproducibility testing. Regulatory clarity will come. Organizations that invest in validation infrastructure now will be better prepared to meet it.

The Stochastic Problem: LLMs are fundamentally non-deterministic. The same query can produce different outputs across runs—the Washington Post test demonstrated this vividly when Fowler’s cardiac grades swung from F to B on identical data.11 Traditional medical device validation assumes reproducibility; LLM validation cannot. This creates audit nightmares: How does one validate a system that gives different answers each time?
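One way to make the stochastic problem measurable is to send the same prompt repeatedly and record how often the answers agree. The sketch below is a minimal illustration under stated assumptions: `fake_model` is a stand-in for a real model call, and the 0.9 threshold is an arbitrary placeholder rather than an accepted standard.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def modal_agreement(outputs: list[str]) -> float:
    """Fraction of runs that returned the most common answer."""
    if not outputs:
        raise ValueError("need at least one output")
    _, count = Counter(outputs).most_common(1)[0]
    return count / len(outputs)


def reproducibility_check(ask: Callable[[str], str], prompt: str,
                          runs: int = 10, threshold: float = 0.9) -> dict:
    """Send the same prompt `runs` times and compare agreement to a threshold."""
    outputs = [ask(prompt) for _ in range(runs)]
    agreement = modal_agreement(outputs)
    return {
        "runs": runs,
        "distinct_answers": sorted(set(outputs)),
        "agreement": agreement,
        "passed": agreement >= threshold,
    }


# Stand-in for a real model call (hypothetical): cycles through letter grades.
_fake_grades = cycle(["F", "B", "F", "C", "F"])


def fake_model(prompt: str) -> str:
    return next(_fake_grades)


report = reproducibility_check(fake_model, "Grade my cardiac health.", runs=10)
print(report)
```

A check like this does not make the model deterministic. It only turns "different answers each time" into a number that can be logged, tracked across releases, and compared against a documented acceptance criterion.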

The Version Control Problem: OpenAI and Anthropic continuously update their models. A clinical workflow validated on GPT-4 may behave differently on GPT-5.2. Neither vendor provides sufficient model versioning guarantees for GxP environments. Pharmaceutical companies require audit trails that demonstrate consistent, validated behavior over time—consumer AI platforms offer no such guarantees.
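Where vendor guarantees are missing, an organization can at least record which model version produced each output. The sketch below shows one possible shape for such an audit record. The model identifiers are hypothetical, and it assumes the deploying team can read back the version that actually served a request, which depends on the vendor's API.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def sha256_hex(text: str) -> str:
    """Hex digest used to fingerprint prompts and outputs without storing them."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class AuditRecord:
    validated_model: str  # version the workflow was validated against
    served_model: str     # version that actually produced the output
    prompt_sha256: str
    output_sha256: str
    timestamp_utc: str

    @property
    def version_drift(self) -> bool:
        """True when the serving model is not the validated one."""
        return self.served_model != self.validated_model


def make_record(validated_model: str, served_model: str,
                prompt: str, output: str) -> AuditRecord:
    return AuditRecord(
        validated_model=validated_model,
        served_model=served_model,
        prompt_sha256=sha256_hex(prompt),
        output_sha256=sha256_hex(output),
        timestamp_utc=datetime.now(timezone.utc).isoformat(),
    )


# Hypothetical identifiers, for illustration only.
record = make_record(
    validated_model="example-model-2026-01-15",
    served_model="example-model-2026-02-01",
    prompt="Summarize the adverse events in this narrative.",
    output="(model output would go here)",
)
print(json.dumps(asdict(record), indent=2))
if record.version_drift:
    print("Served model differs from the validated version; revalidation needed.")
```

Hashing rather than storing the prompt and output is a design choice that keeps sensitive text out of the log; teams that need full replay would store the content in a controlled system instead.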

The Benchmark Gap: OpenAI touts HealthBench validation with 260+ physicians.47 But HealthBench is OpenAI’s own benchmark—not an independent standard. No consensus exists on what “good enough” looks like for healthcare AI. Until independent, consensus-driven benchmarks emerge—analogous to clinical trial endpoints—healthcare AI validation will remain ad hoc, vendor-driven, and unreliable.

📋 TL;DR: At present, there is no validated and reproducible way to determine if a given healthcare AI platform is clinically fit for purpose.

📊 Evidence: LLM outputs are stochastic, so the same question can yield a different answer on each run. Models update continuously without versioning guarantees. Benchmarks used for verification are typically created by the vendor selling the product.

➡️ Implication: Organizations deploying healthcare AI are accepting validation risk that traditional medical technology would never tolerate.

✅ Your Move: Require model version guarantees, establish internal validation protocols, and document acceptance criteria before deployment. Vendor benchmarks should not be treated as evidence of clinical readiness.

VII. The Regulatory Landscape: What Exists, What’s Missing, and What’s Coming

FDA’s January 2026 CES Announcements: At CES in January 2026, FDA Commissioner Marty Makary announced the agency would move “at Silicon Valley speed,” expanding enforcement discretion for clinical decision support and broadening wellness exemptions for non-invasive wearables measuring blood pressure, glucose, and other physiological metrics.18,19,20

The Oversight Vacuum: Consumer AI health tools that avoid diagnostic claims face limited FDA oversight.19,20 Neither ChatGPT Health nor Claude for Healthcare is classified as Software as a Medical Device (SaMD) or Clinical Decision Support (CDS) software, and neither has undergone FDA premarket review. Both display prominent disclaimers: “not intended for diagnosis or treatment.” Yet what matters is the intended use and functional impact of these healthcare AI platforms. When 40 million people daily use these tools to interpret symptoms, assess lab results, or decide whether to seek care,5 the disclaimer doesn’t change the tool’s functional role in health decisions. It simply shifts liability from the vendor to the patient.

The Strategist’s Perspective

The pivot toward limited regulatory oversight for these healthcare AI products is a double-edged sword for pharma. While it accelerates the deployment of wellness and support tools, it removes the regulatory ‘shield’ that a traditional 510(k) clearance provides. Without a formal FDA stamp, the burden of clinical validation shifts entirely to internal Quality, Regulatory, and Compliance teams. If you are building on these platforms, you are now your own regulator.

What HIPAA Does NOT Cover: Once patients exercise their 21st Century Cures Act data-sharing rights, HIPAA protections evaporate.13,21 OpenAI and Anthropic are not covered entities under federal health privacy law. Both offer Business Associate Agreements (BAAs) for their enterprise products; however, BAAs are voluntary, and consumer products operate outside these frameworks.

The Transparency Problem: While OpenAI cites collaboration with 260+ physicians, other critical details remain opaque. No published peer-reviewed validation studies. No patient involvement in design. No external safety monitoring mechanisms. No clear adverse event reporting pathway. No transparency on training data sources.

State-level AI healthcare regulation is active but fragmented. In 2025, 47 states introduced 250+ bills, with 33 signed into law across 21 states.22 California’s AB 489 prohibits AI from implying it is a licensed healthcare professional.23 Illinois bars AI from making independent therapeutic decisions without clinician oversight.23 Texas requires disclosure of AI use in clinical care and oversight of AI-generated medical records.23 Utah has taken a permissive stance, launching a first-in-nation pilot in January 2026 that allows AI to autonomously renew prescriptions for 190 common medications without real-time clinician involvement.48 Other states are reportedly considering similar programs, creating a patchwork of rules that complicates compliance for organizations operating across multiple jurisdictions.
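For teams operating in several states, the patchwork described above can be tracked as a simple lookup keyed by jurisdiction. The sketch below encodes only the four state rules named in this article, paraphrased; the structure and the function name `obligations_for` are illustrative assumptions, and the table is not a complete register of state law.

```python
# Rules paraphrased from the paragraph above; everything else is unrecorded.
STATE_RULES: dict[str, str] = {
    "CA": "AI may not imply it is a licensed healthcare professional (AB 489).",
    "IL": "AI may not make independent therapeutic decisions without clinician oversight.",
    "TX": "Disclose AI use in clinical care; oversee AI-generated medical records.",
    "UT": "Pilot permits autonomous AI prescription renewals for listed medications.",
}


def obligations_for(footprint: list[str]) -> dict[str, str]:
    """Map each state in a deployment footprint to its recorded rule, flagging gaps."""
    return {
        state: STATE_RULES.get(state, "No rule recorded here; needs legal review.")
        for state in footprint
    }


for state, rule in obligations_for(["CA", "TX", "NY"]).items():
    print(f"{state}: {rule}")
```

Flagging unrecorded states explicitly, rather than returning nothing, keeps a missing entry from being read as "no obligations."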

The EU AI Act’s high-risk requirements take effect August 2, 2026.44 Healthcare AI used for insurance risk assessment, eligibility evaluation, or emergency triage requires conformity assessments and CE marking.44 Both OpenAI and Anthropic signed the EU’s General-Purpose AI Code of Practice.33 This addresses transparency and safety requirements for foundation models but does not automatically satisfy high-risk obligations, which apply based on deployment context.

Infographic generated by the author using Nano Banana Pro (February 2026)

📋 TL;DR: Consumer AI health tools face an evolving regulatory landscape in which oversight remains limited and state-level rules vary widely.

📊 Evidence: Forty million daily health queries occur on platforms outside traditional FDA classification.5 HIPAA does not apply to consumer AI products. Twenty-one states have enacted AI healthcare laws,22 while the EU AI Act high-risk requirements take effect August 2, 2026.44

The Bottom Line: Consumer AI health tools operate in a governance vacuum—no HIPAA coverage, no FDA premarket review, state laws under federal challenge. The absence of external regulation doesn’t eliminate organizational risk; it transfers responsibility to deploying institutions. Healthcare organizations should establish internal governance, implement human oversight, and document clinical validation before deployment.

VIII. Strategic Recommendations by Stakeholder

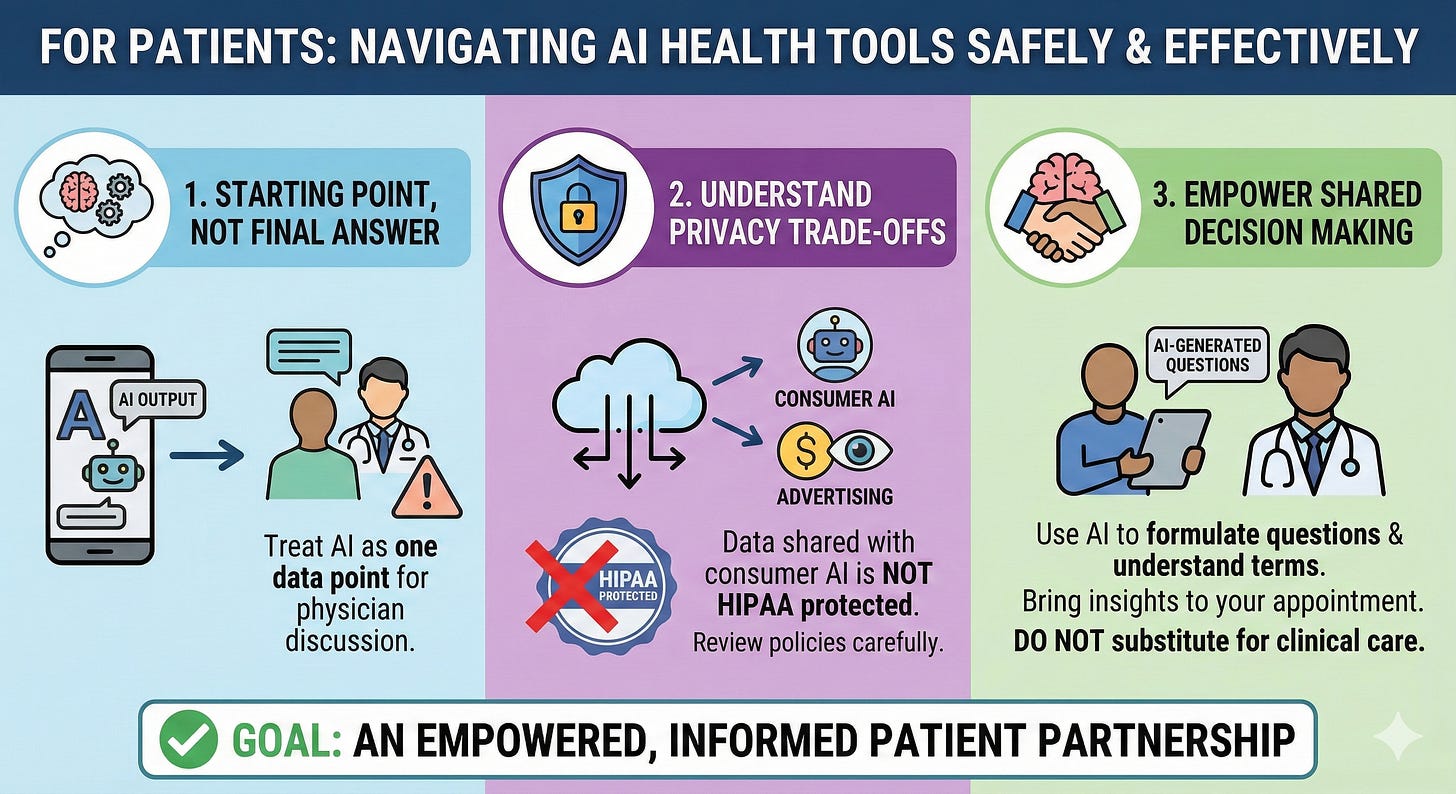

For Patients

• Use AI health tools as a starting point, not a final answer. Treat AI outputs as one data point to discuss with your physician.

• Understand the privacy trade-offs. Once you share health data with consumer AI, HIPAA no longer protects it. Review privacy policies carefully — especially as vendors explore advertising models.

• Use AI as a force multiplier for shared decision-making with your physician. AI can help you formulate questions and understand medical terminology. Bring your AI-generated questions to the appointment; don’t substitute AI consultation for clinical care.

Infographic generated by the author using Nano Banana Pro (February 2026)

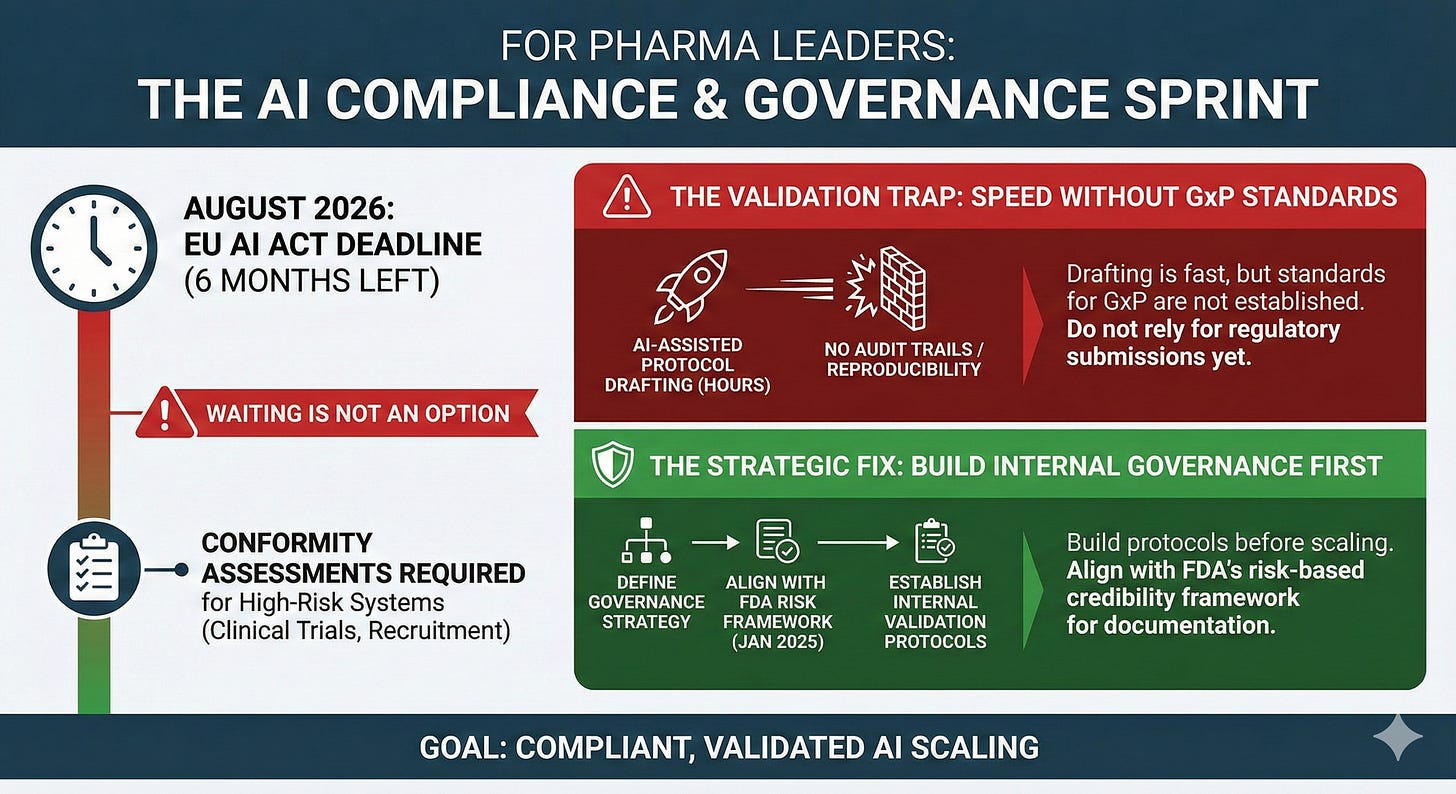

For Pharma Leaders

• Get ahead of EU AI Act compliance. August 2026 is six months away. High-risk AI systems used in clinical trials, patient recruitment, and regulatory submissions require conformity assessments. Waiting is not an option.

• Establish validation protocols for AI-assisted regulatory work before scaling. AI-assisted protocol drafting in hours instead of days is compelling—but audit trails and reproducibility standards for GxP environments are not established yet. Build internal validation protocols before relying on AI for regulatory submissions.

• Define your AI model governance strategy. The FDA’s January 2025 draft guidance introduced a risk-based credibility framework for AI in drug development, with documentation requirements scaling from minimal for hypothesis-generating tools to full transparency and prospective validation for high-risk clinical applications. Align your internal governance with this framework.

Infographic generated by the author using Nano Banana Pro (February 2026)

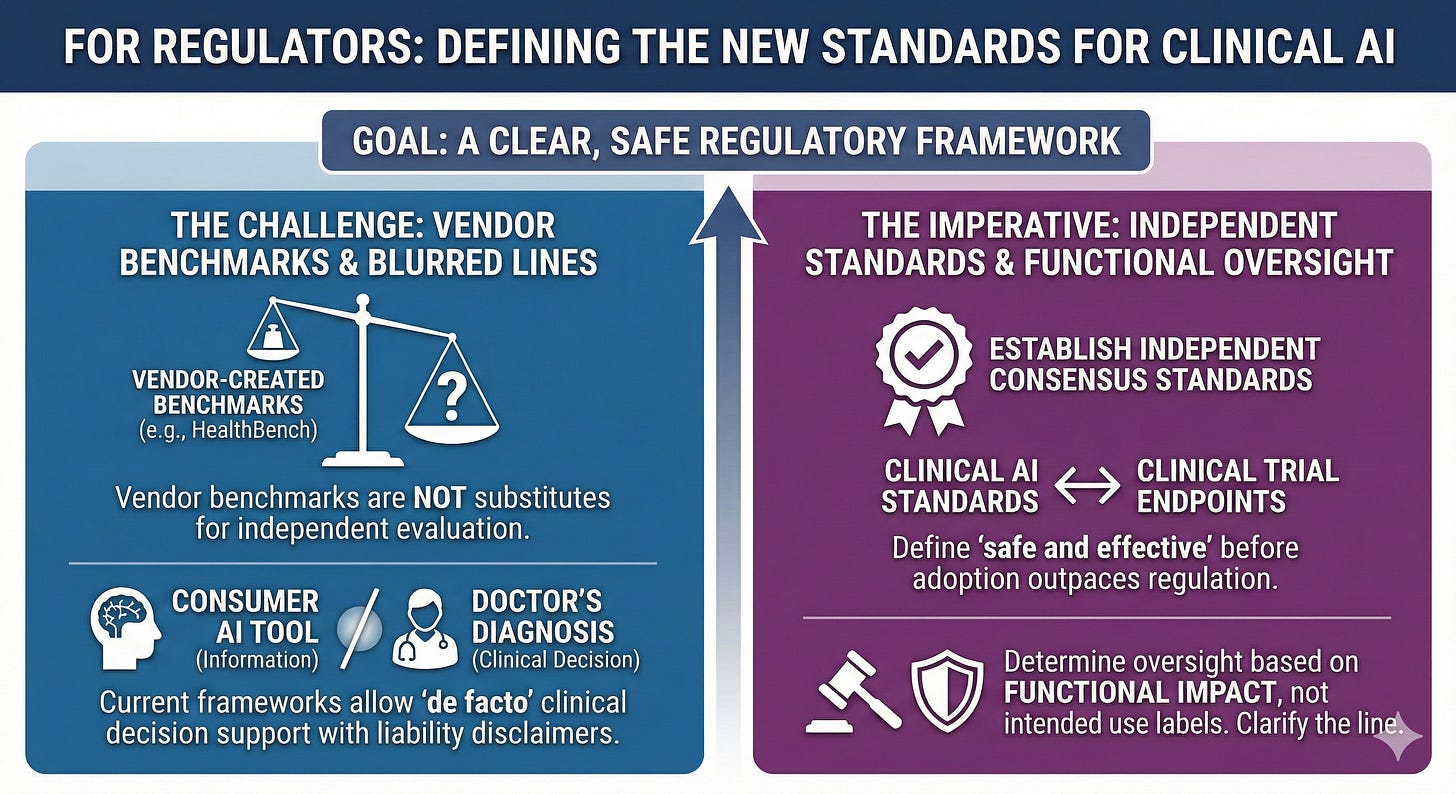

For Regulators

• Require independent validation standards. Vendor-created benchmarks like HealthBench are not substitutes for independent evaluation. Regulators should establish consensus performance standards for clinical AI—analogous to clinical trial endpoints—before market adoption outpaces the ability to define what “safe and effective” means.

• Clarify the line between information and diagnosis. Current frameworks allow consumer AI tools to function as de facto clinical decision support while disclaimers shift liability to patients. Regulators must decide whether functional impact—not intended use labels—should determine oversight requirements.

Infographic generated by the author using Nano Banana Pro (February 2026)

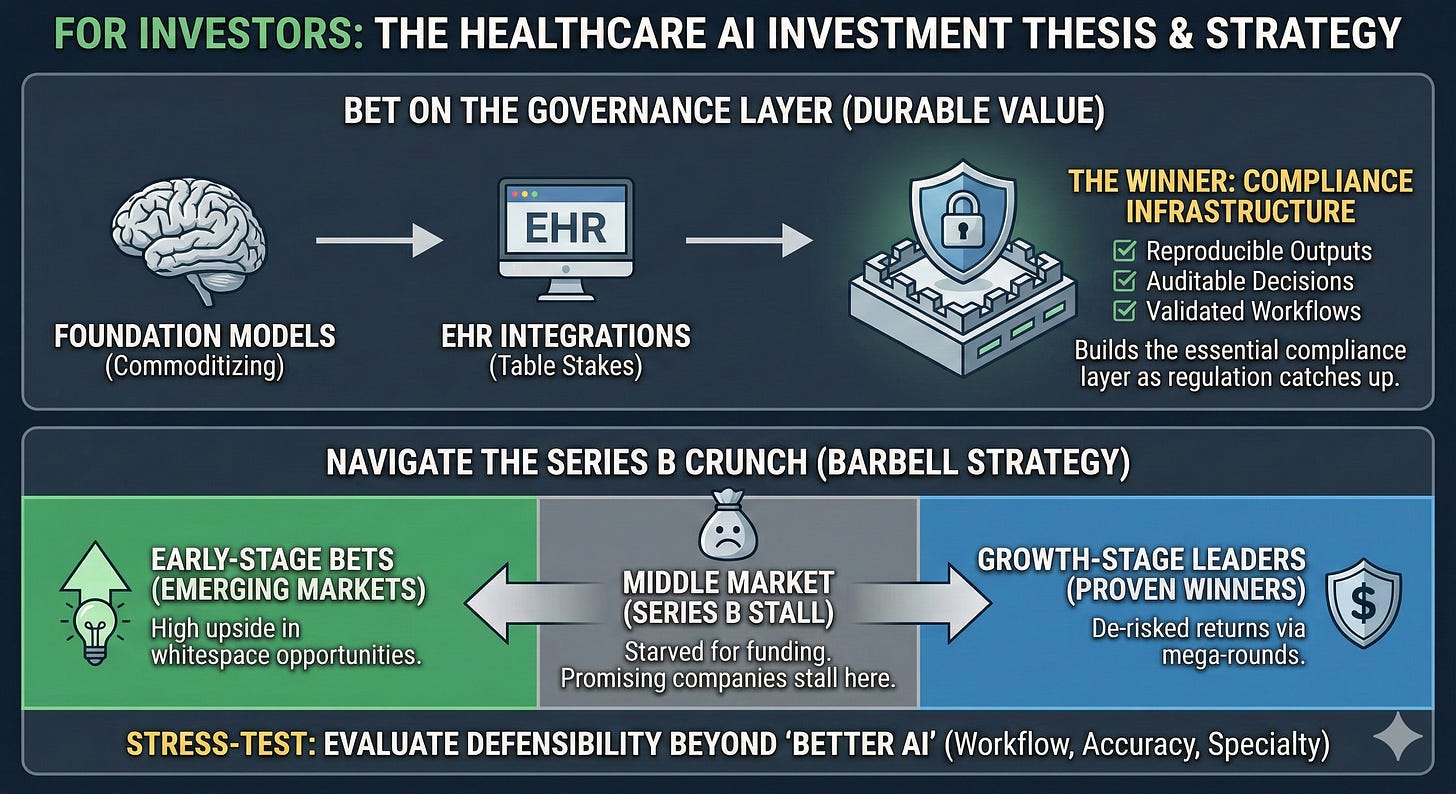

For Investors

• Bet on the governance layer. Foundation models are commoditizing. EHR integrations are table stakes. The startup that builds reproducible outputs, auditable decisions, and validated workflows—the compliance infrastructure for healthcare AI—will capture durable value as regulation catches up to adoption.

• Stress-test portfolio companies against incumbent pricing. Epic is rumored to price its AI scribe at ~$80/provider/month—a fraction of the $100–$800 that standalone ambient AI startups typically charge.24 If confirmed, this pricing pressure will force startups to compete on more than model quality. Evaluate defensibility based on workflow integration, specialty coverage, and higher accuracy — because “better AI” alone won’t sustain margins when incumbents bundle good-enough AI with commodity prices.

• Navigate the Series B crunch deliberately. Capital is bifurcating: mega-rounds are flowing to proven winners while early-stage bets chase emerging markets (whitespace opportunities). The middle market (companies past seed but not yet breakout) is starved for funding. Position at the extremes: early-stage for high upside, growth-stage for de-risked returns. Series B is where promising companies stall.
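The pricing stress test in the second recommendation above can be reduced to two numbers per company: how many times the incumbent price a startup charges, and how deep a cut it would need to match. The sketch below uses the rumored ~$80 figure and the $100 and $800 endpoints quoted in the text; the function names are hypothetical, and the $80 price is unconfirmed.

```python
def price_premium(startup_price: float, incumbent_price: float) -> float:
    """How many times the incumbent price the startup charges."""
    if startup_price <= 0 or incumbent_price <= 0:
        raise ValueError("prices must be positive")
    return startup_price / incumbent_price


def required_price_cut(startup_price: float, incumbent_price: float) -> float:
    """Fractional cut needed to match the incumbent (0.0 if already cheaper)."""
    if startup_price <= 0 or incumbent_price <= 0:
        raise ValueError("prices must be positive")
    return max(0.0, 1 - incumbent_price / startup_price)


INCUMBENT_PRICE = 80.0  # rumored USD per provider per month, per the text

for startup_price in (100.0, 800.0):  # endpoints of the range quoted in the text
    premium = price_premium(startup_price, INCUMBENT_PRICE)
    cut = required_price_cut(startup_price, INCUMBENT_PRICE)
    print(f"${startup_price:.0f}/month: {premium:.2f}x incumbent, "
          f"{cut:.0%} cut to match")
```

A startup at the top of the range would need to justify a tenfold premium through workflow integration or specialty coverage, which is the defensibility question the bullet raises.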

Infographic generated by the author using Nano Banana Pro (February 2026)

THE CONTRARIAN SYNTHESIS

Why Both the Bulls and the Bears Are Missing the Point

OpenAI owns consumer surface area but lacks enterprise trust. Anthropic owns enterprise trust but lacks consumer scale. Google has research (MedGemma) but not consumer deployment.

All of them lack what healthcare actually requires: reproducible outputs, auditable decisions, and validated workflows.

The startup that builds the “governance layer”—not the foundation model, not the EHR integration, but the validation infrastructure—will capture the sustainable value that current players cannot.

Health and pharma organizations that wait for regulatory clarity will cede competitive position. Organizations that deploy without governance will accumulate liability. The winning card: build internal governance frameworks now, deploy in controlled “AI safe zones,” and scale only with validation evidence.

IX. The Stakes

Healthcare AI has crossed from experiment to infrastructure. The question is no longer capability but accountability: who governs, who validates, and who bears the risk when confident algorithms meet clinical reality. Capital is flowing to companies that can demonstrate traction; regulation is lagging behind adoption; and the governance gap is widening faster than the technology gap.

For pharma leaders, the value proposition is clear: compress protocol design from weeks to hours, unlock evidence from fragmented real-world data, and accelerate time-to-market—but the window to build compliant AI infrastructure before August 2026 is closing fast. For healthcare leaders, the calculus is similar: build governance frameworks now, or inherit liability later. Patients are already using AI to interpret their symptoms, question their diagnoses, and arrive at appointments with expectations shaped by algorithms. The organizations that thrive will be those prepared to meet them.

The AI-augmented patient is already here. The question is whether you’re ready to meet them.

———

If this analysis was valuable, please share it with colleagues navigating healthcare AI decisions. Subscribe to The Health Innovation Insider for analysis at the intersection of AI, emerging tech, and healthcare leadership—depth, not hype.

Kausar Riaz Ahmed, PhD, MBA, RAC is a pharma AI executive and former FDA regulatory strategist. She writes The Health Innovation Insider—exploring AI and emerging tech transforming healthcare, and the leadership driving innovation. Follow on LinkedIn for real-time commentary.

AI Disclosure and Editorial Methodology

I use AI the way I once used research teams: to accelerate the work, not replace the thinking. AI tools supported research and early drafts of this analysis. The strategic framing, synthesis, editorial decisions, and conclusions are my own — shaped by years of work at the intersection of pharma, innovation, and regulatory science. All data has been independently verified. I take full ownership of the final work.

References

1. Rock Health. 2025 year-end digital health funding overview: A tale of two markets; January 2026. Accessed February 14, 2026. https://rockhealth.com/insights/2025-year-end-digital-health-funding-overview-a-tale-of-two-markets/

2. Menlo Ventures. The State of AI in Healthcare 2025. Menlo Ventures; October 2025. Accessed February 14, 2026. https://menlovc.com/perspective/2025-the-state-of-ai-in-healthcare/

3. American Medical Association. AMA augmented intelligence research: physician sentiments around the use of AI in healthcare: motivations, opportunities, risks, and use cases. February 2025. Accessed February 14, 2026. http://ama-assn.org/system/files/physician-ai-sentiment-report.pdf

4. American Medical Association. 2025 AMA Prior Authorization Physician Survey. February 2025. Accessed February 2026. https://www.ama-assn.org/system/files/prior-authorization-survey.pdf

5. OpenAI. AI as a Healthcare Ally: Understanding How People Are Using ChatGPT for Health. OpenAI; January 2026. Accessed February 14, 2026. https://cdn.openai.com/pdf/2cb29276-68cd-4ec6-a5f4-c01c5e7a36e9/OpenAI-AI-as-a-Healthcare-Ally-Jan-2026.pdf

6. OpenAI. Introducing ChatGPT Health. OpenAI Blog. January 7, 2026. Accessed February 14, 2026. https://openai.com/index/introducing-chatgpt-health/

7. Anthropic. Advancing Claude in healthcare and the life sciences. Anthropic News. January 11, 2026. Accessed February 14, 2026. https://www.anthropic.com/news/healthcare-life-sciences

8. Golden D and Mahvar F. Next generation medical image interpretation with MedGemma 1.5 and medical speech to text with MedASR. Google Research Blog. January 13, 2026. Accessed February 14, 2026. https://research.google/blog/next-generation-medical-image-interpretation-with-medgemma-15-and-medical-speech-to-text-with-medasr/

9. MarketsandMarkets. AI Agents in Healthcare Market Set to Surge to USD 6.92 Billion by 2030. GlobeNewswire; January 26, 2026. Accessed February 14, 2026. https://www.globenewswire.com/news-release/2026/01/26/3225727/0/en/AI-Agents-in-Healthcare-Market-Set-to-Surge-to-USD-6-92-Billion-by-2030-MarketsandMarkets.html

10. Olson P. ChatGPT’s AI-Healthcare push has a fatal flaw. Bloomberg Opinion. January 15, 2026. Accessed February 14, 2026.

11. Fowler G. I let ChatGPT analyze a decade of my Apple Watch data. Then I called my doctor. Washington Post. January 26, 2026. Accessed February 14, 2026.

12. ECRI. Top 10 Health Technology Hazards for 2026 Executive Brief. ECRI/PRNewswire; January 21, 2026. Accessed February 14, 2026.

13. Geoghegan S. Quoted in: Smalley S. ChatGPT Health feature draws concern from privacy critics over sensitive medical data. The Record. January 8, 2026. Accessed February 14, 2026. https://therecord.media/chatgpt-health-draws-concern-privacy-critics

14. Crawford A. Quoted in: Smalley S. ChatGPT Health feature draws concern from privacy critics over sensitive medical data. The Record. January 8, 2026. Accessed February 14, 2026. https://therecord.media/chatgpt-health-draws-concern-privacy-critics

15. Takita H, Kabata D, Walston SL, et al. A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians. npj Digit Med. 2025;8:175. doi:10.1038/s41746-025-01543-z

16. Yang X, Li T, Wang H, et al. Multiple large language models versus experienced physicians in diagnosing challenging cases with gastrointestinal symptoms. npj Digit Med. 2025;8:85. doi:10.1038/s41746-025-01486-5

17. Goh E, Gallo R, Hom J, et al. Large language model influence on diagnostic reasoning: a randomized clinical trial. JAMA Netw Open. 2024;7(10):e2440969. doi:10.1001/jamanetworkopen.2024.40969

18. Lawrence L, Aguilar M, Palmer K, Trang B. FDA announces sweeping changes to oversight of wearables, AI-enabled devices. STAT News. January 6, 2026. Accessed February 14, 2026. https://www.statnews.com/2026/01/06/fda-pulls-back-oversight-ai-enabled-devices-wearables/

19. U.S. FDA. Clinical decision support software: guidance for industry and Food and Drug Administration staff. January 6, 2026. Accessed February 14, 2026. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/clinical-decision-support-software

20. U.S. FDA. General wellness: policy for low risk devices: guidance for industry and Food and Drug Administration staff. January 6, 2026. Accessed February 14, 2026. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/general-wellness-policy-low-risk-devices

21. Johnson D. Your AI doctor doesn’t have to follow the same privacy rules as your real one. Cyberscoop. February 11, 2026. Accessed February 14, 2026. https://cyberscoop.com/ai-healthcare-apps-hipaa-privacy-risks-openai-anthropic/

22. Seiger R, Augenstein J, Shashoua M, et al. Manatt Health: Health AI policy tracker. December 2025. Accessed February 14, 2026. https://www.manatt.com/insights/newsletters/health-highlights/manatt-health-health-ai-policy-tracker

23. Yoo J, Razmazma A, Ratican SH, et al. The New Regulatory Reality for AI in Healthcare: How Certain States Are Reshaping Compliance. Fenwick. September 29, 2025. Accessed February 14, 2026. https://www.fenwick.com/insights/publications/the-new-regulatory-reality-for-ai-in-healthcare-how-certain-states-are-reshaping-compliance

24. OrbDoc. Best AI medical scribes 2026: price & feature comparison. OrbDoc. Updated November 2025. Accessed February 14, 2026. https://orbdoc.com/compare/ai-medical-scribe-comparison-2025

25. Park K. Function Health raises $298M Series B at $2.5B valuation. TechCrunch. November 19, 2025. Accessed February 14, 2026. https://techcrunch.com/2025/11/19/function-health-closes-298m-series-b-at-a-2-5b-valuation-launches-medical-intelligence/

26. Hippocratic AI. Hippocratic AI raises $126 million in Series C at $3.5 billion valuation led by Avenir Growth to expand clinically safe generative AI agents across healthcare. Palo Alto, CA: Business Wire; November 3, 2025. Accessed February 14, 2026. https://www.businesswire.com/news/home/20251103432446/en/Hippocratic-AI-Raises-126-Million-in-Series-C-at-3.5-Billion-Valuation-Led-by-Avenir-Growth-to-Expand-Clinically-Safe-Generative-AI-Agents-Across-Healthcare

27. Rooney K. OpenEvidence, the ‘ChatGPT for doctors,’ doubles valuation to $12 billion. CNBC. January 21, 2026. Accessed February 14, 2026. https://www.cnbc.com/2026/01/21/openevidence-chatgpt-for-doctors-doubles-valuation-to-12-billion.html

28. Capoot A. OpenAI acquires health-care technology startup Torch for $60 million, source says. CNBC. January 12, 2026. Accessed February 14, 2026. https://www.cnbc.com/2026/01/12/open-ai-torch-health-care-technology.html

29. Bort J. OpenAI buys tiny health records startup Torch for, reportedly, $100M. TechCrunch. January 12, 2026. Accessed February 14, 2026. https://techcrunch.com/2026/01/12/openai-buys-tiny-health-records-startup-torch-for-reportedly-100m/

30. Whitfill J. Quoted in: Health system perspectives on AI launches. Becker’s Hospital Review. January 2026. Accessed February 14, 2026.

31. Fox A. ChatGPT for Healthcare, Claude AI pose governance challenges. Healthcare IT News. January 2026. Accessed February 14, 2026.

32. de la Zerda A. Quoted in: The liability gap in healthcare AI. Healthcare IT News. January 2026. Accessed February 14, 2026.

33. European Commission. The General-Purpose AI Code of Practice. Accessed February 16, 2026. https://digital-strategy.ec.europa.eu/en/policies/contents-code-gpai

34. Temkin M. In just 4 months, AI medical scribe Abridge doubles valuation to $5.3B. TechCrunch. June 24, 2025. Accessed February 14, 2026. https://techcrunch.com/2025/06/24/in-just-4-months-ai-medical-scribe-abridge-doubles-valuation-to-5-3b/

35. Landi H. Ambience reels in $243M series C as investors continue to bet big on ambient AI. Fierce Healthcare. July 29, 2025. Accessed February 2026. https://www.fiercehealthcare.com/health-tech/ambience-banks-243m-series-c-investors-continue-bet-big-ambient-ai

36. OpenAI. Introducing OpenAI for Healthcare. January 8, 2026. Accessed February 10, 2026. https://openai.com/index/openai-for-healthcare/

37. Hagen J. JPM: Anthropic launches Claude for Healthcare. MobiHealthNews. January 12, 2026. Accessed February 14, 2026. https://www.mobihealthnews.com/news/jpm-anthropic-launches-claude-healthcare

38. Kahn J. Anthropic unveils Claude for Healthcare, expands life science features, and partners with HealthEx to let users connect medical records. Fortune. January 11, 2026. Accessed February 14, 2026. https://fortune.com/2026/01/11/anthropic-unveils-claude-for-healthcare-and-expands-life-science-features-partners-with-healthex-to-let-users-connect-medical-records/

39. Landi H. JPM26: Anthropic launches Claude for Healthcare to turbocharge AI efficiency at health systems, payers. Fierce Healthcare. January 11, 2026. Accessed February 14, 2026. https://www.fiercehealthcare.com/ai-and-machine-learning/jpm26-anthropic-launches-claude-healthcare-targeting-health-systems-payers

40. HealthEx. HealthEx partners with Anthropic to turn patients’ scattered medical records into actionable health insights. GlobeNewswire. January 11, 2026. Accessed February 14, 2026. https://www.globenewswire.com/news-release/2026/01/11/3216498/0/en/HealthEx-Partners-with-Anthropic-to-Turn-Patients-Scattered-Medical-Records-into-Actionable-Health-Insights.html

41. Sweetman S. Bridging the gap between AI and medicine: Claude in Microsoft Foundry advances capabilities for healthcare and life sciences customers. Microsoft Industry Blogs. January 11, 2026. Accessed February 14, 2026. https://www.microsoft.com/en-us/industry/blog/healthcare/2026/01/11/bridging-the-gap-between-ai-and-medicine-claude-in-microsoft-foundry-advances-capabilities-for-healthcare-and-life-sciences-customers/

42. Martindale J. Anthropic signs $30 billion deal with Amazon to deploy Claude on AWS — Nvidia and Microsoft jointly invest $15 billion into AI firm as it becomes first provider across Azure, AWS and Google. Tom’s Hardware. November 19, 2025. Accessed February 14, 2026. https://www.tomshardware.com/tech-industry/artificial-intelligence/anthropic-signs-usd30-billion-deal-with-amazon-to-deploy-claude-on-aws-nvidia-and-microsoft-jointly-invest-usd15-billion-into-ai-firm-as-it-becomes-first-provider-across-azure-aws-and-google

43. Kaggle. The MedGemma Impact Challenge. Kaggle Competitions. Accessed February 14, 2026. https://www.kaggle.com/competitions/med-gemma-impact-challenge

44. Orrick. High-Risk AI – EU AI Act Guide. Accessed February 16, 2026. https://ai-law-center.orrick.com/eu-ai-act/high-risk-ai/

45. Smith D. OpenAI says it plans to report stunning annual losses through 2028—and then turn wildly profitable just two years later. Fortune. November 12, 2025. Accessed February 2026. https://fortune.com/2025/11/12/openai-cash-burn-rate-annual-losses-2028-profitable-2030-financial-documents/

46. Olson P. Sam Altman’s ‘Last Resort’ for ChatGPT Looks a Lot Like Facebook. Bloomberg Opinion. January 20, 2026. Accessed February 14, 2026. https://www.bloomberg.com/opinion/articles/2026-01-20/chatgpt-and-openai-are-starting-to-look-a-lot-like-facebook-and-meta

47. OpenAI. Introducing HealthBench. OpenAI Blog. May 2025. Accessed February 14, 2026. https://openai.com/index/healthbench/

48. Alberty E. Utah permits nation’s first AI drug prescriptions. Axios Salt Lake City. January 7, 2026. Accessed February 14, 2026. https://www.axios.com/local/salt-lake-city/2026/01/07/utah-ai-drug-prescriptions-doctronic
