The Trust Deficit: Why Healthcare’s AI Revolution Is Stalling at the Last Mile

A Strategic Quadrant Analysis of the Healthcare AI Trust–Adoption Matrix

Part 1 of a 2-part series • Companion deep dive (to the article on LinkedIn) for Substack subscribers

The TRICORDER trial, published in The Lancet in January 2026 (Kelshiker et al., 2026), crystallized a truth that those of us in Health AI have long observed:

The bottleneck to real-world clinical impact is not algorithmic performance or technological capability. It is trust.

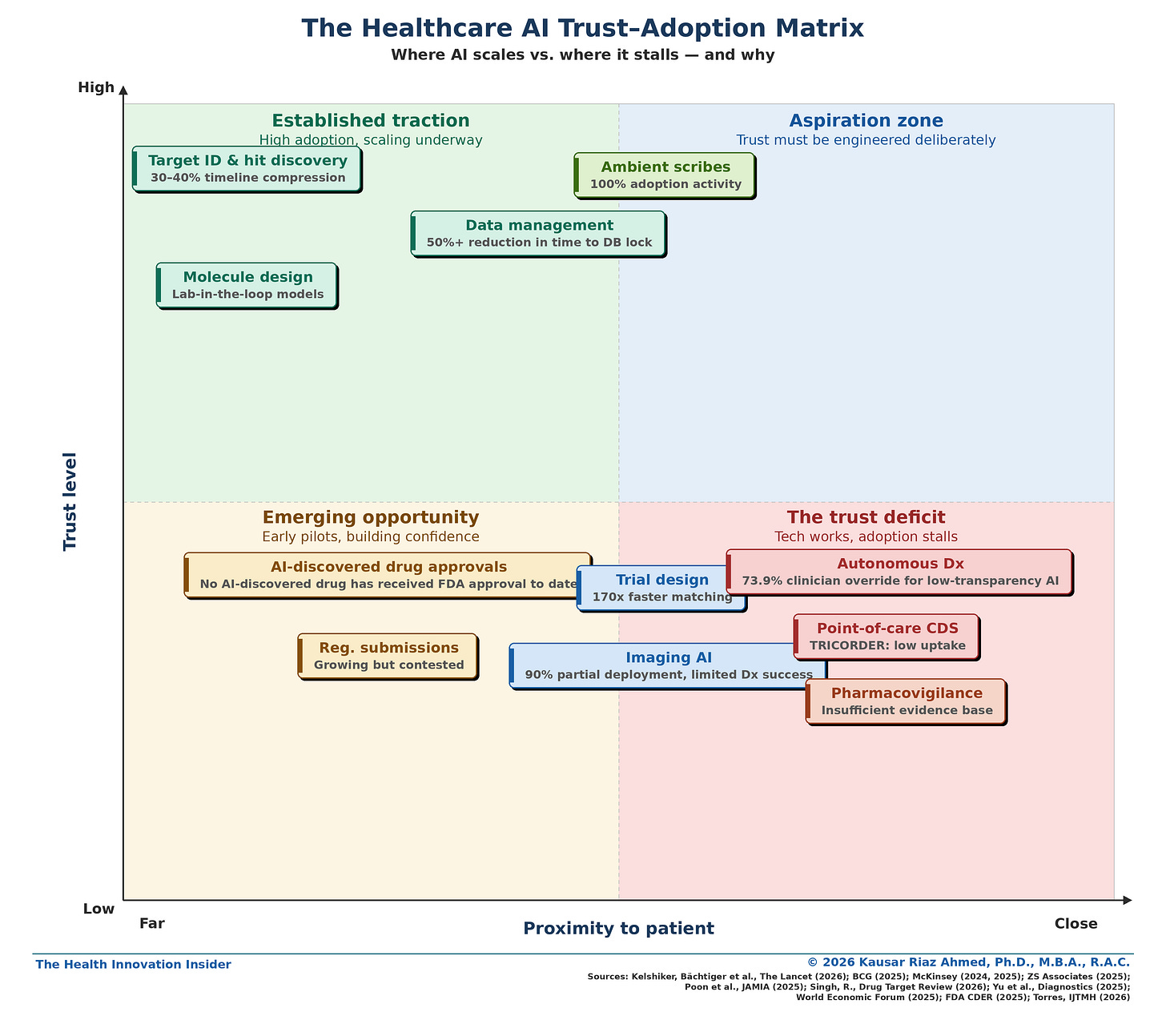

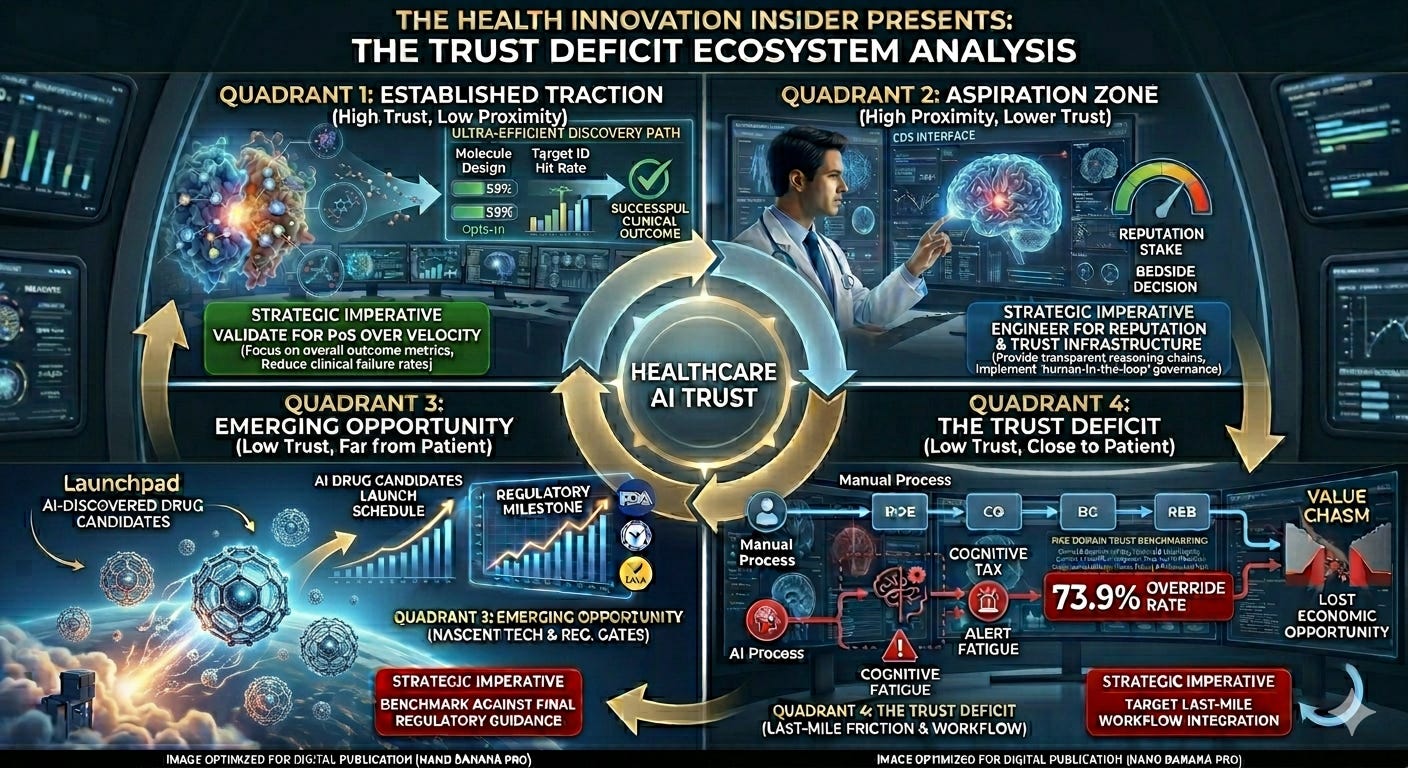

In my companion LinkedIn article, I introduced the Healthcare AI Trust–Adoption Matrix — a framework mapping AI use cases by trust level and proximity to the patient.

The gradient was clear: trust decreases as AI moves closer to clinical decision-making.

But diagnosing the problem is the easy part. This analysis goes deeper — examining each quadrant through four lenses: where AI has delivered value, where trust gaps remain, why it matters for the benchmarking framework, and what would actually move each use case up the matrix.

This article — Part 1 of a two-part series — maps where trust has been established versus where it remains elusive across the healthcare value chain. Part 2 will introduce a six-domain benchmarking framework with operational metrics and KPIs.

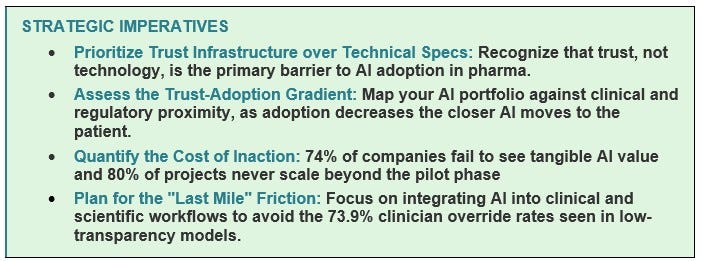

Before we dive in, one framing point. The trust deficit isn’t free. BCG estimates that 74% of companies see no tangible value from AI investments (BCG, 2025a). McKinsey found that 70% of digital transformations fail due to change management (McKinsey & Company, 2024). Clinical trial delays of even six months can cost hundreds of millions in lost revenue (Pharmaphorum, 2025). And 80% of healthcare AI projects fail to scale beyond the pilot phase (Chatni, 2026). Every month an AI tool sits unused is value destroyed — for shareholders, for patients, and for the scientists who built it.

The Trust–Adoption Spectrum Across the Healthcare AI Value Chain

When I mapped AI adoption across the pharma value chain against trust levels and proximity to the patient, a clear gradient emerged.

Quadrant 1: Established Traction (High Trust, Far from Patient)

AbbVie’s Howard Jacob once described using the company’s ARCH platform to identify two potential drugs for a rare disease patient in just four hours (BIO.IT World, 2024). That’s the promise of this quadrant, and it’s being delivered.

Where AI has demonstrably delivered value

In target identification, AI has compressed discovery timelines by 30–40%, with preclinical development reported to fall from 3–4 years to 13–18 months (Singh, 2026). Genentech’s “lab-in-the-loop” model embeds AI directly into experimental workflows to guide molecular design in real time (McKinsey & Company, 2025a). In data management, AI delivers a 50%+ reduction in time to database lock and 70% fewer manual queries (McKinsey & Company, 2024). Benchling’s 2026 biotech AI report found that literature review (76% adoption), protein structure prediction (71%), and scientific reporting (66%) are the breakthrough use cases. They succeed because of clean, verifiable data that fits naturally into daily scientific workflows (Benchling, 2026).

Where the trust gap remains

None of these gains have translated into an FDA-approved drug. AI-discovered compounds show progression rates similar to traditionally discovered compounds (Singh, 2026). And 68% of pharma CDIOs identify poor data quality as the primary reason AI fails (ZS Associates, 2025). Even in the highest-trust quadrant, the data foundation isn’t always solid.

Why this matters for the trust framework

This quadrant validates transparency trust and workflow trust — two domains most easily satisfied when outputs are reviewable, iteration cycles are fast, and AI fits into existing lab workflows. But it exposes a gap in outcome trust: until an AI-discovered drug reaches patients, the ultimate validation is missing.

What would move this up the matrix

An FDA approval of a genuinely AI-discovered drug. Head-to-head data showing AI-discovered compounds outperforming traditional ones on clinical success rates. Industry adoption of the Pistoia Alliance’s vendor-neutral benchmarking framework (Pistoia Alliance, 2025).

Quadrant 2: Aspiration Zone (High Trust, Close to Patient)

A physician finishes a 15-minute consultation. Before they’ve reached for their keyboard, the AI has drafted the note. They review it, make two edits, and sign. The patient never knew AI was involved — and the physician just saved 8 minutes of documentation.

Where AI has demonstrably delivered value

Ambient scribes are the only AI use case with 100% adoption activity across 43 surveyed US health systems, with 53% reporting high success (Poon et al., 2025). Trust is high because the output is fully visible, editable, and owned by the clinician. AI augments rather than replaces judgment.

Where the trust gap remains

Ambient scribes succeed because they’re administrative, not diagnostic. They document what happened; they don’t tell the clinician what to do. The gap between “write my notes” and “guide my diagnosis” remains enormous. And as these tools scale, questions about whether AI-generated notes faithfully capture nuanced clinical reasoning will intensify. Patients, too, may have concerns — the WEF (2025) argues that patient trust should be a core performance indicator, and patient awareness of AI in their care encounter is still limited.

Why this matters for the trust framework

Ambient scribes validate a critical principle: patient-adjacent AI can earn trust when the human-in-the-loop is genuine, not performative. The clinician reviews, edits, and signs. This maps to governance trust (clear ownership) and transparency trust (visible, editable output). This is the proof of concept for what high-trust, patient-facing AI looks like.

What would move more use cases into this quadrant

Extending the ambient scribe model to clinical decision support: AI that presents reasoning, confidence levels, and uncertainty estimates rather than binary predictions. AI that flags rather than decides, with the clinician making the final call. The common thread: transparency, reviewability, and clear human ownership of the output.
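To make "flags rather than decides" concrete, here is a minimal Python sketch of what such an output might look like. It is an illustration under stated assumptions, not a description of any real product: the class name, field names, and example values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ClinicalFlag:
    """A reviewable suggestion, not a decision. All names here are hypothetical."""
    finding: str
    confidence: float                     # model's estimated probability, 0.0 to 1.0
    interval: Tuple[float, float]         # (low, high) uncertainty range
    reasoning: List[str] = field(default_factory=list)  # evidence shown to the clinician
    clinician_decision: Optional[str] = None            # empty until a human signs off

    def summary(self) -> str:
        """Render the flag with its confidence and uncertainty, never a bare yes/no."""
        low, high = self.interval
        return (f"FLAG: {self.finding} "
                f"(confidence {self.confidence:.0%}, range {low:.0%}-{high:.0%})")

    def sign_off(self, clinician: str, accepted: bool) -> None:
        """Record the clinician's final call; the tool itself never sets this."""
        verdict = "accepted" if accepted else "overridden"
        self.clinician_decision = f"{verdict} by {clinician}"


# Illustrative values only; not taken from any trial or device.
flag = ClinicalFlag(
    finding="possible reduced ejection fraction",
    confidence=0.72,
    interval=(0.61, 0.81),
    reasoning=["illustrative evidence item 1", "illustrative evidence item 2"],
)
print(flag.summary())
flag.sign_off("Dr. Example", accepted=True)
print(flag.clinician_decision)
```

The design choice mirrors the ambient scribe pattern: the output is visible and reviewable, and the decision field stays empty until a named clinician fills it.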

Quadrant 3: Emerging Opportunity (Low Trust, Far from Patient)

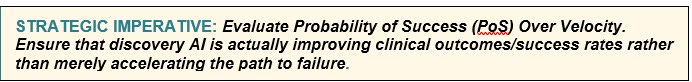

In 2025, the first drug with both its target and molecule designed entirely by AI completed Phase IIa for idiopathic pulmonary fibrosis (Singh, 2026). It was a genuine milestone. And then, in the same year, multiple other AI-designed drugs were deprioritized or shelved after Phase II.

Where AI has demonstrably delivered value

The Phase IIa milestone is real. AI-powered regulatory submission tools can accelerate clinical study report drafting by 40% (McKinsey & Company, 2024). The FDA’s CDER has seen a significant increase in submissions using AI components (FDA CDER, 2025). But these are process efficiencies, not outcome transformations.

Where the trust gap remains

No AI-discovered drug has received FDA approval to date (Singh, 2026). AI-discovered compounds show clinical progression rates similar to traditionally discovered ones — raising the question of whether AI improves clinical success rates or just accelerates the same failure rate (Singh, 2026). Regulatory guidance remains draft-stage, with the FDA publishing its first guidance on AI for regulatory decision-making in January 2025 but the framework still evolving (FDA CDER, 2025).

Why this matters for the trust framework

This quadrant exposes outcome trust and regulatory trust as binary gates. One can’t partially approve a drug. Until these milestones are cleared, the category remains “emerging” regardless of preclinical speed. It also highlights data integrity trust — 68% of CDIOs cite poor data quality as the #1 failure cause (ZS Associates, 2025).

What would move this up the matrix

An FDA approval of an AI-discovered drug. Final regulatory guidance (not draft) with clear expectations. Transparent publication of clinical success rate comparisons.

Quadrant 4: The Trust Deficit (Low Trust, Close to Patient)

Here’s the TRICORDER story in one sentence: an AI stethoscope that doubled heart failure detection sat largely unused because it added steps (an additional 15 seconds to the workflow) and wasn’t connected to the EHR.

Where AI has demonstrably delivered value

At the individual level, the evidence is strong. TRICORDER patients examined with the AI stethoscope were twice as likely to be diagnosed with heart failure and 3.5 times more likely to be identified with atrial fibrillation (Kelshiker et al., 2026). Imaging AI is deployed in 90% of US health systems (Poon et al., 2025). AI value-add at an individual level is not in question.

Where the trust gap remains

At population scale, adoption collapses. TRICORDER’s primary endpoint showed no significant population-level improvement because clinicians didn’t consistently use the device (Kelshiker et al., 2026). Clinician override rates for AI diagnostics are revealing: 73.9% for AI with minimal transparency versus 1.7% for transparent AI (Yu et al., 2025; WEF, 2025). In pharmacovigilance, only 34% of AI devices have post-market surveillance obligations (Torres, 2026). And a Johns Hopkins study found that physicians who rely on AI faced a “competence penalty”: their peers viewed them as less capable (Dai et al., 2025). The cultural stigma compounds the structural barriers.
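A back-of-the-envelope Python sketch shows why a strong per-patient effect can fade at population scale when utilization is low. This is a deliberate simplification and not the trial's statistical analysis. The 2x multiplier echoes the "twice as likely" per-patient finding above. The baseline detection rate and the utilization levels are hypothetical values chosen for illustration, and the function name is my own.

```python
def population_detection_rate(baseline: float,
                              per_patient_multiplier: float,
                              utilization: float) -> float:
    """Expected detection rate across all patients when only a fraction
    (utilization) are actually examined with the tool."""
    with_tool = baseline * per_patient_multiplier
    return utilization * with_tool + (1 - utilization) * baseline


baseline = 0.02  # hypothetical baseline detection rate, not a trial figure

for utilization in (1.0, 0.5, 0.1):  # hypothetical shares of patients examined with the tool
    rate = population_detection_rate(baseline, 2.0, utilization)
    relative_gain = rate / baseline - 1
    print(f"utilization {utilization:.0%}: relative gain {relative_gain:.0%}")
```

Under these assumptions the population-level gain scales directly with utilization: a tool that doubles detection per patient delivers only a fraction of that when used on a fraction of patients, which makes the effect much harder to distinguish from noise.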

Why this matters for the trust framework

This is the only quadrant where trust breaks down across nearly every domain simultaneously: transparency (binary outputs, no reasoning/explainability), workflow (adds friction), governance (unclear accountability), regulatory (insufficient post-market surveillance), and outcome (population-level failure despite per-patient success). This isn’t a single-variable problem; it’s a system failure, which is precisely why a multi-domain benchmarking framework is necessary for use cases in this quadrant.

What would move this up the matrix

TRICORDER provides the roadmap: 72% of high-utilization practices ranked EHR integration as the most critical enabler (Kelshiker et al., 2026). Beyond that: AI must provide confidence levels and reasoning chains, not binary outputs. Every system needs a named accountable executive. Pragmatic implementation trials, which measure adoption barriers alongside effectiveness, must become standard.

The Question Nobody Is Asking: Who Owns Trust?

In most pharma organizations, trust falls between functional silos. Data science builds the model. Medical affairs validates it. Regulatory submits it. Commercial scales it. Nobody owns “trust” as an integrated programme.

This is the organizational gap that makes the matrix’s bottom-right (‘The Trust Deficit’) quadrant so persistent. Fixing transparency requires collaboration between data science and medical teams. Fixing workflow integration requires IT and clinical operations working together. Fixing governance requires senior leadership engagement, not delegation to technical teams — Deloitte’s 2026 enterprise survey found that organizations where senior leadership actively shapes AI governance achieve significantly greater business value (Deloitte, 2025).

J&J’s Ashita Batavia described the need for “trilingual” professionals fluent in data science, clinical science, and business strategy, and noted how rare they are: “It’s rare to find a person who has practiced medicine, has been a trialist who understands data sciences, and has a business strategy lens. But if a candidate has a couple of those skills, they can upskill and learn the rest” (McKinsey & Company, 2025a). These are the trust brokers pharma needs. But they can’t operate in a vacuum. Trust needs an owner: a named executive accountable for the six-domain framework (see more in Part 2 of this article series), with the authority to convene across functions and the budget to invest in the infrastructure (governance, workflow, transparency) that makes AI trustworthy.

The companies that get this right will be what McKinsey calls the redesigners — organizations that fundamentally rework their operating models with AI embedded across the value chain. The rest will be the tinkerers — running isolated pilots that never scale, yielding low returns and eroding competitive advantage (McKinsey & Company, 2025a).

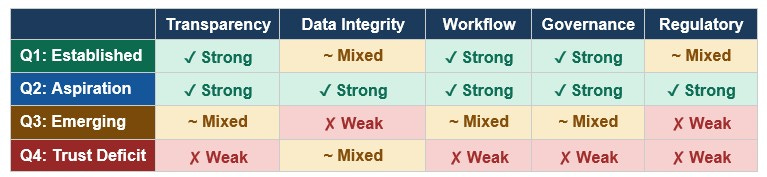

Summary: Trust Domains by Quadrant

How each quadrant performs across five trust domains (✓ Strong ~ Mixed ✗ Weak)

Note: Outcome Trust is omitted from this table because it functions differently — it is the dependent variable that improves as the other five domains strengthen. It is not a domain that can be directly engineered; it is the result of engineering the other five domains well.

What’s Next

The matrix tells a story every pharma AI leader needs to hear: the technology is not the bottleneck. Trust is. And trust breaks down differently in each quadrant, across different domains, for different reasons.

The cost of inaction is quantifiable. Every AI tool that sits unused is value destroyed: for the balance sheet, for the pipeline, and for the patients who could have benefited. The healthcare and pharma industries do not lack for algorithms or investment. They have yet to build the trust infrastructure to let those algorithms deliver impact.

Higher trust → higher adoption → deeper integration → measurable impact.

In Part 2, I’ll introduce the six-domain trust benchmarking framework — with specific metrics, KPIs, and OKRs designed for operational use. Because diagnosing the trust deficit is the easy part. Closing it requires the same rigor we apply to clinical endpoints.

As Howard Jacob put it — we’ve seen this movie before with genomics. The skepticism fades — but only when we earn trust by building the systems that make AI trustworthy.

If this analysis was valuable, please share it with colleagues navigating healthcare AI decisions. Subscribe to The Health Innovation Insider on Substack for analysis at the intersection of AI, emerging tech, and healthcare leadership: depth, not hype.

Kausar Riaz Ahmed, PhD, MBA, RAC is a pharma AI executive and former FDA regulatory strategist. She writes The Health Innovation Insider — exploring AI and emerging tech transforming healthcare, and the leadership driving innovation. Follow along on LinkedIn and Substack for real-time commentary.

Disclaimers

1. Conflict of Interest Disclosures

This article is intended for educational and thought-leadership purposes. The views and opinions expressed herein are strictly my own and do not necessarily reflect the official policy or position of any affiliated organizations, including past and present employers. Reference to specific products, companies, or entities is for illustrative purposes only and does not constitute an endorsement by the author or her employers.

2. AI Disclosure and Editorial Methodology

I use AI the way I once used research teams: to accelerate the work, not replace the thinking. AI tools supported research and early drafts of this analysis. The strategic framing, synthesis, editorial decisions, and conclusions are my own — shaped by years of work at the intersection of pharma, innovation, and regulatory science. All data has been independently verified. I take full ownership of the final work.

References

BCG. (2025a). The widening AI value gap: Build for the future. https://media-publications.bcg.com/The-Widening-AI-Value-Gap-Sept-2025.pdf

Benchling. (2026). 2026 biotech AI report. https://www.benchling.com/biotech-ai-report-2026

BIO.IT World. (2024, August 7). Howard Jacob on how AbbVie’s ARCH is unlocking major opportunities.

CapeStart. (2024). Life science AI research report. https://www.capestart.com/wp-content/uploads/2024/11/CapeStart-GenAI-Report-Sept.-24.pdf

Chatni, R. (2026, February 16). The “pilot purgatory”: Why 80% of pharma AI projects fail. HIT Consultant. https://hitconsultant.net/2026/02/16/cloudera-life-sciences-ai-scalability-pilot-failure-roi/

Dai, T., Wolf, R., & Yang, H. (2025). Physicians’ perceptions of AI use in clinical decision-making. Johns Hopkins Hub. https://hub.jhu.edu/2025/10/27/doctors-viewed-negatively-for-ai-usage/

FDA Center for Drug Evaluation and Research. (2025). Artificial intelligence for drug development. https://www.fda.gov/about-fda/center-drug-evaluation-and-research-cder/artificial-intelligence-drug-development; https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-enabled-medical-devices

Kelshiker, M. A., Bächtiger, P., et al. (2026). Triple cardiovascular disease detection with an AI-enabled stethoscope (TRICORDER). The Lancet, 407(10529), 704–715. https://doi.org/10.1016/S0140-6736(25)02156-7

McKinsey & Company. (2024, January 9). Generative AI in the pharmaceutical industry: Moving from hype to reality. https://www.mckinsey.com/industries/life-sciences/our-insights/generative-ai-in-the-pharmaceutical-industry-moving-from-hype-to-reality

McKinsey & Company. (2025a, November 6). How pharma is rewriting the AI playbook: Perspectives from industry leaders. https://www.mckinsey.com/industries/life-sciences/our-insights/the-synthesis/how-pharma-is-rewriting-the-ai-playbook-perspectives-from-industry-leaders

McKinsey & Company. (2025b, January 8). AbbVie’s head of genomics research on the transformative power of AI. https://www.mckinsey.com/industries/life-sciences/our-insights/abbvies-head-of-genomics-research-on-the-transformative-power-of-ai

Mehta, V., Komanduri, A., Schulman, K., et al. (2025). Evaluating transparency in AI/ML model characteristics for FDA-reviewed medical devices. NPJ Digital Medicine, 8, 673. https://doi.org/10.1038/s41746-025-02052-9

Pharmaphorum. (2025, December 11). Garbage in, garbage out: The hidden data crisis in pharma. https://pharmaphorum.com/digital/garbage-garbage-out-hidden-data-crisis-pharma

Pistoia Alliance. (2025). Secure benchmarking of AI solutions. https://www.pistoiaalliance.org/new-idea/secure-benchmarking-of-ai-solutions/

Poon, E. G., Lemak, C. H., Rojas, J. C., Guptill, J., & Classen, D. (2025). Adoption of AI in healthcare. JAMIA, 32(7), 1093–1100. https://doi.org/10.1093/jamia/ocaf065

Singh, R. (2026, February 9). AI in drug discovery: 2025 in review. Drug Target Review. https://www.drugtargetreview.com/article/192951/ai-in-drug-discovery-2025-in-review/

Torres, M. J. S. (2026). Regulatory fragmentation in healthcare AI. IJTMH, 12(1). https://www.ijtmh.com/index.php/ijtmh/article/view/251

World Economic Forum. (2025, December). The trust gap: Why AI in healthcare must feel safe. https://www.weforum.org/stories/2025/12/trust-gap-ai-healthcare-asia/

Yu, Y., et al. (2025). Enhancing clinician trust in AI diagnostics: A dynamic framework for confidence calibration and transparency. Diagnostics. [Referenced via WEF, 2025].

ZS Associates. (2025, November). Scaling AI in pharma and biotech: 2026 CDIO research. https://www.zs.com/insights/scaling-ai-in-pharma-cdio-2026